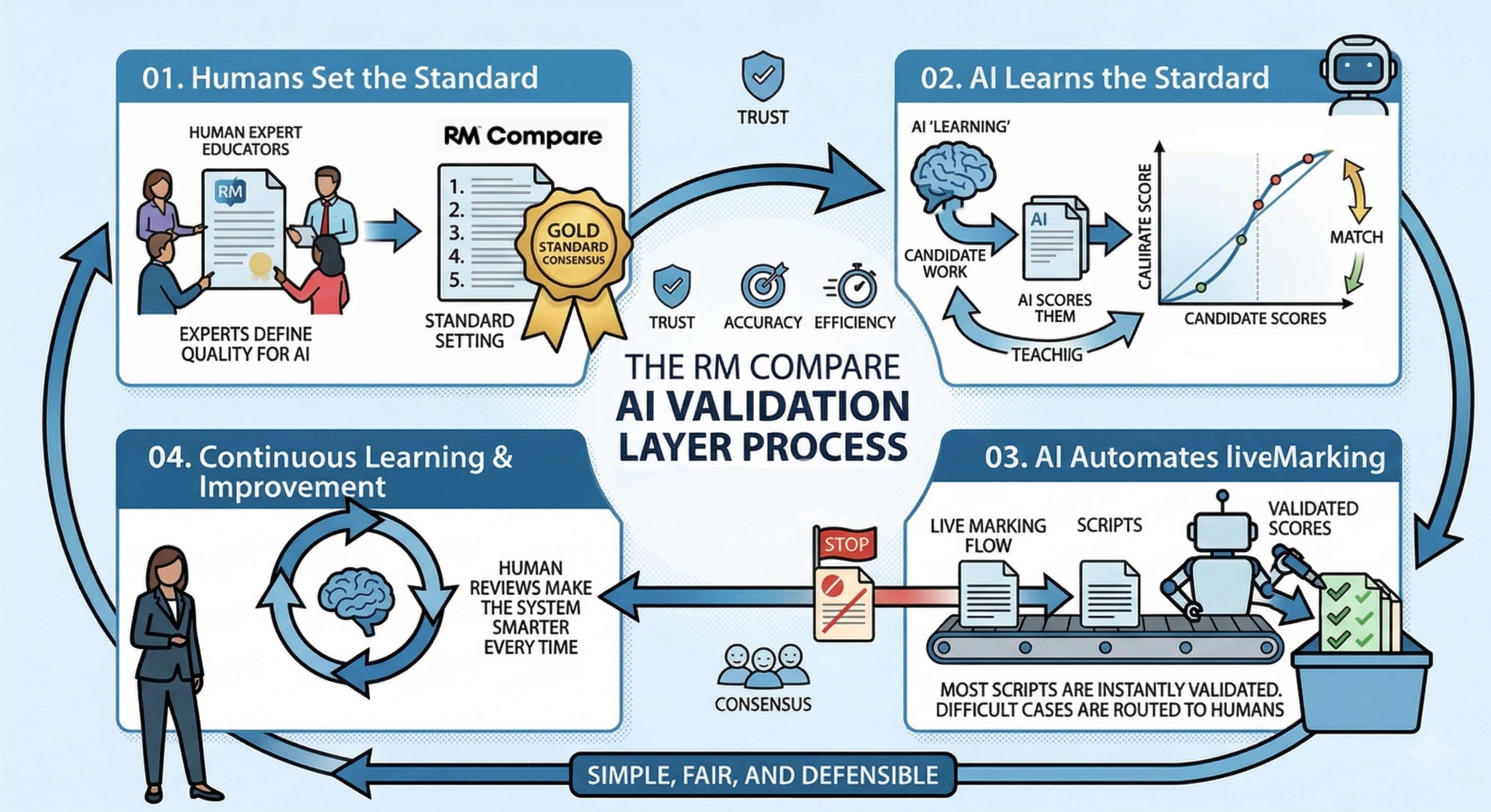

AI Validation

Introducing the RM Compare AI Validation Layer

Bridging the gap between AI speed and human expertise. Establish the "Ground Truth" needed to calibrate and trust automated assessment systems.

0.9+ Reliability

Proven human-AI alignment

Audit-Ready Data

Defensible logic for regulators

Human in the Loop

Calibrate AI with expert standard

Governance & Compliance

Defensible AI Decision Making

In a world of AI regulation, "because the algorithm said so" is not enough. Move beyond spreadsheets to a robust, auditable awarding process.

🛡️ Protect Against Challenge

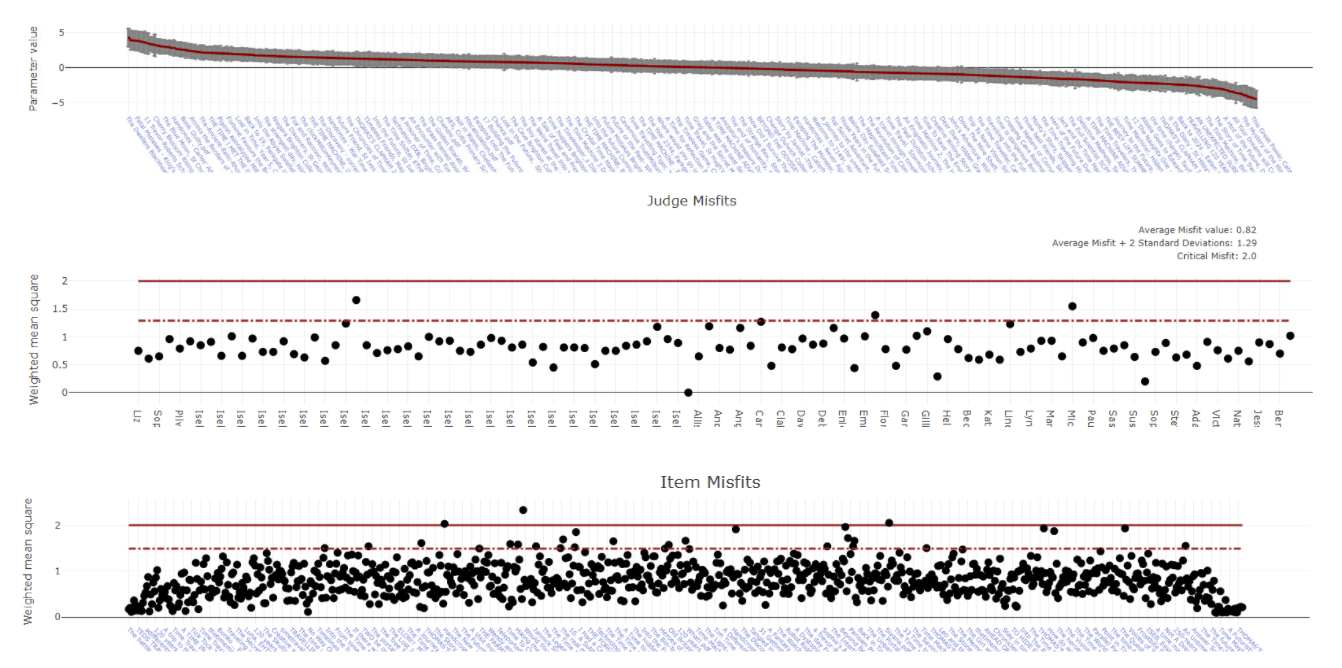

Provide evidence that your AI is calibrated against a democratic, multi-expert human standard. Every decision is backed by statistical reliability data.

⚖️ Identify & Mitigate Bias

Automatically highlight "misfits" where human and machine disagree. Spot model drift before it impacts high-stakes outcomes.
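To make the idea of a "misfit" concrete, here is a minimal Python sketch. It is an illustration only, not RM Compare's actual method: the function name `flag_misfits`, the score dictionaries, and the threshold of 2.0 are all assumptions made for this example. It standardises the human and AI scores so the two scales are comparable, then flags any item where the two disagree by more than the threshold.

```python
from statistics import mean, pstdev


def flag_misfits(human, ai, threshold=2.0):
    """Return the ids of items where human and AI scores disagree strongly.

    `human` and `ai` are hypothetical dicts mapping item id -> score.
    The threshold (in standard deviations) is an illustrative assumption.
    """
    if set(human) != set(ai):
        raise ValueError("human and ai must score the same items")

    def zscores(scores):
        # Standardise so that different scoring scales can be compared.
        mu = mean(scores.values())
        sigma = pstdev(scores.values())
        if sigma == 0:
            raise ValueError("scores have no spread; cannot standardise")
        return {item: (value - mu) / sigma for item, value in scores.items()}

    human_z = zscores(human)
    ai_z = zscores(ai)

    # A large gap between standardised scores marks a candidate misfit.
    return sorted(
        item for item in human if abs(ai_z[item] - human_z[item]) > threshold
    )


# Hypothetical data: the AI has effectively swapped the best and worst items.
human_scores = {"a": 1, "b": 2, "c": 3, "d": 4, "e": 5}
ai_scores = {"a": 5, "b": 2, "c": 3, "d": 4, "e": 1}
misfits = flag_misfits(human_scores, ai_scores)
print(misfits)
```

Running the same check on each new batch of AI outputs is one simple way to watch for drift: a rising number of flagged items suggests the model is moving away from the human standard.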

Works where AI alone fails

The gold standard for subjective, complex, and human-centric verification.

"RM Compare provides the missing link in AI assessment: the ability to verify automated outputs against the collective wisdom of our best human experts."

Assessment Lead, RM Compare