
From pilots to products: how organisations can modernise without blowing everything up

Every exam body I talk to feels the same squeeze. Governments want innovation. Schools want recognition for richer work. Generative AI is crashing into the system from all sides. And yet, when results day comes, the only thing that really matters is whether the grades stand up in the media and in court.

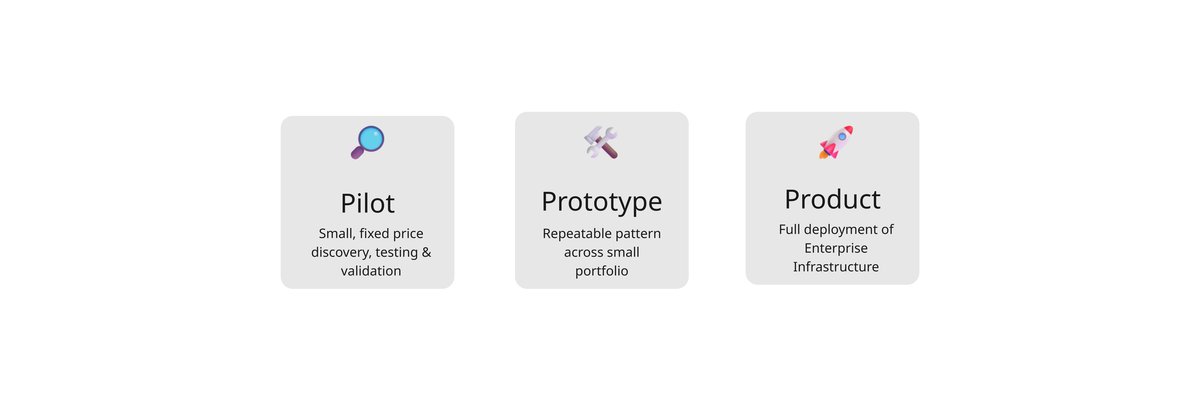

With RM Compare, we’ve learned that there is a slower, safer and ultimately more powerful path: build a human‑centred judgement infrastructure first, then let AI work around it. The way to get there isn’t through one huge transformation programme. It’s through a series of deliberately small, well‑designed steps: Pilot → Prototype → Product.

Step 1: Pilot – learn fast, touch lightly

A pilot is where we both get to ask, “Is this even a good idea for your context?”—without anyone pretending it’s already business as usual.

That might mean taking a single component (say, a coursework project or a practical) and running a comparative judgement session alongside the existing marking approach. We’re not trying to re‑engineer the exam body’s entire system. We’re trying to learn.

In a good pilot, questions are more important than features. Do judges reach more stable decisions when they compare work instead of scoring it? Do standards become easier to articulate and share? Does this give the exam body a story they could explain to teachers, unions and the public?
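For readers less familiar with how a pile of "this one is better than that one" decisions turns into a rank order, here is a minimal sketch. It assumes a Bradley-Terry model, which is a common way to scale pairwise comparisons; this post does not say which model RM Compare uses, and the function name and script labels below are purely illustrative.

```python
from collections import defaultdict


def bradley_terry(judgements, iterations=200):
    """Estimate a relative strength for each script from pairwise judgements.

    judgements: list of (winner, loser) pairs, one per judge decision.
    Returns a dict mapping script -> strength, with strengths summing to 1.
    Assumes every script wins and loses at least once (illustrative sketch only).
    """
    items = sorted({script for pair in judgements for script in pair})
    wins = defaultdict(int)
    pair_counts = defaultdict(int)
    for winner, loser in judgements:
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1

    # Start with equal strengths, then apply the standard iterative update.
    strength = {item: 1.0 / len(items) for item in items}
    for _ in range(iterations):
        updated = {}
        for i in items:
            denom = sum(
                count / (strength[i] + strength[j])
                for pair, count in pair_counts.items() if i in pair
                for j in pair if j != i
            )
            updated[i] = wins[i] / denom
        total = sum(updated.values())
        strength = {item: value / total for item, value in updated.items()}
    return strength


# Hypothetical decisions from a small pilot session.
decisions = [
    ("script_a", "script_b"), ("script_a", "script_b"), ("script_b", "script_a"),
    ("script_b", "script_c"), ("script_b", "script_c"), ("script_c", "script_b"),
    ("script_a", "script_c"), ("script_a", "script_c"), ("script_c", "script_a"),
]
scale = bradley_terry(decisions)
ranking = sorted(scale, key=scale.get, reverse=True)
```

Re-running a fit like this on different subsets of judges is one simple way to ask the stability question above: if the ranking barely moves, the standard is holding.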

Technically, the rule is simple: use the platform as it is. We rely on the existing Studio, Hub and Live tools, and the APIs we’ve already committed to. If we hit a limitation, we don’t hack around it for just one customer; we capture it as product feedback. The pilot earns the right to influence the roadmap, not to fork it.

Step 2: Prototype – prove a pattern, not a one‑off

If a pilot shows promise, the next question is: can this become a repeatable part of how you work?

That’s where the prototype comes in. Here we’re not just asking “does adaptive comparative judgement (ACJ) work on this component?” but “is there a pattern we can use again and again across your portfolio?”

The prototype might take the same comparative judgement workflow and apply it to several components across different subjects. Or it might explore how standards created in RM Compare flow into existing on‑screen marking systems, so that examiner training and monitoring are anchored in real student work. Or it might be a full exam‑series AI validation study, where we check an automated marker against a human judgement standard.
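To make the AI validation idea concrete, here is a minimal sketch of one possible check: how closely an automated marker's rank order agrees with the rank order from a human judgement session. It uses Spearman's rank correlation as an example agreement measure; the function names, the data and the choice of statistic are all assumptions for illustration, not a description of how any particular validation study is run.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation computed on the ranks."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one side has no variation")
    return cov / (var_x * var_y) ** 0.5


# Hypothetical data: one value per script, in the same script order.
human_scale = [2.1, 0.4, -0.3, -1.9]  # scale values from a human judgement session
ai_marks = [38, 31, 33, 12]           # marks from the automated marker under test
agreement = spearman(human_scale, ai_marks)
```

A single coefficient is only a starting point. A real study would also look at where the disagreements fall, since a marker that agrees overall but misorders scripts near a grade boundary is a different kind of risk.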

The important thing is that what we do at this stage is describable. We should be able to write it down as a configuration template, a short set of API calls, a playbook that a local team can follow. If the only way to repeat it is to fly the original project team back in and reinvent everything, then we haven’t built a prototype. We’ve just done a bigger pilot.

For the exam body, this phase is about confidence: confidence that they can run this themselves, that it fits their operational and political reality, and that it doesn’t require burning down what already works.

Step 3: Product – treating judgement as infrastructure

Only once a pattern has survived contact with real life do we talk about products in the full sense: contracts, capacity, multi‑year commitments.

At this point, RM Compare stops being “something we used in a project once” and becomes part of the assessment infrastructure. It sits alongside item banking, on‑screen marking and other functions as a standard tool: the place where human judgement is captured, aggregated and governed at scale.

Practically, that might look like an enterprise licence rather than a tangle of one‑off fees. It might look like annual judgement‑capacity bands instead of per‑component deals, so the organisation is free to move components in and out as needs change. It might include a standing option to run AI validation studies whenever they roll out or update automated marking.

More important than the commercial detail is the shift in ownership. The exam body’s own people, and their local partners, own the “final mile”: choosing where to use comparative judgement, running sessions, interpreting outputs. Our job becomes keeping the platform coherent, predictable and well‑documented, so it can support many different stories without having to be rewritten for each one.

Why this path suits high‑stakes assessment

It aligns with how exam bodies already manage risk. Research, then pilot, then phased implementation is baked into their culture. A Pilot → Prototype → Product path simply gives that rhythm clearer stages and names in the context of comparative judgement and AI.

It keeps humans at the centre. Comparative judgement uses human experts to define and maintain standards. Those standards can then be used to train examiners, to audit consistency and, if desired, to test whether AI systems are behaving. The infrastructure exists to support human judgement, not to bypass it.

And it preserves optionality. At any point, the organisation can decide that the world isn’t ready, or that other priorities have overtaken this one, and step off the path without having bet the system on a single all‑or‑nothing change.

For us, it also guards the integrity of RM Compare as a product. Instead of vanishing into a few custom national implementations, the platform stays global, with a roadmap that serves all of its users.

Growing together, not bolting on

We’re reflecting this approach in how we talk about RM Compare publicly. Rather than presenting a grab‑bag of services, we’re starting to describe a relationship that can grow in clear stages: a pilot to explore the fit, a prototype to prove a pattern, then a product that becomes part of the exam body’s core infrastructure.

If that sounds like the path your organisation needs, the invitation is straightforward: start small, with one component and a clear question. Use the pilot to learn. Use the prototype to prove a pattern. Then, when you are ready, turn judgement into the infrastructure that everything else can lean on.