- Opinion

Reimagining Assessment in an AI World: How the RM Compare Ecosystem Supports Global Insights.

The International Summit on the Teaching Profession took place in Tallin, Estonia last week. The Summit was titled 'Switching Gears: Teachers and Learners in the Future Learning Environment'. The themes were reflected in an OECD report (Re-imagining Teaching in an Accelerating World) released shortly before the summit based on data from the 2024 TALIS survey into the state of teaching. This is the world’s largest survey of teachers and principals. In 2024, 280 000 educators from 55 education systems shared insights into their working conditions, professional development and the realities of the modern classroom.

The latest OECD Reimagining Teaching in an Accelerating World report makes one thing very clear: teaching and assessment can’t stay as they are. As AI reshapes what students can do with a few prompts, the things that are easiest to test have become the easiest to automate. Systems everywhere are being pushed to value richer learning, trust professionals more, and treat AI as an educational ally rather than a threat.

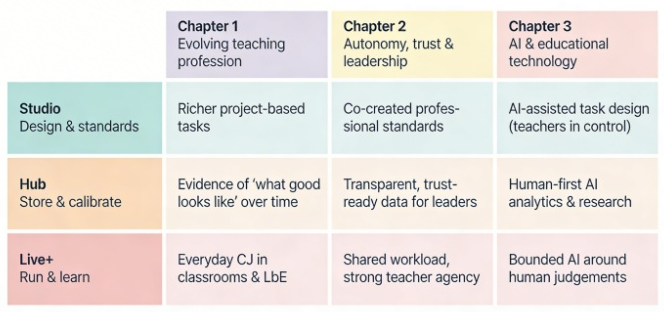

To organise that challenge, the report uses three themes:

- The evolving teaching profession

- Autonomy, trust and leadership

- AI and educational technology as an ally, not a replacement.

The RM Compare ecosystem – 💻Studio | 🔗Hub | 📳Live – was not designed around this report, but it happens to line up almost perfectly with it.

1. Supporting an evolving teaching profession

The report asks systems to move beyond narrow, recall‑focused exams towards project‑based, interdisciplinary, and socially rich learning experiences. It also highlights the growing importance of social‑emotional skills, collaboration and students’ ability to navigate complexity. That kind of learning is hard to capture in traditional tasks and even harder to mark reliably with a rubric.

This is where 💻|Studio comes in. It gives educators a place to design richer project‑based tasks and co‑create exemplars of “what good looks like” in those tasks. Instead of trying to squeeze complex work into a checklist, teachers and experts can use comparative judgement to surface real examples of strong, mid‑range and emerging performance. The “Richer project‑based tasks” cell in the matrix is not just a slogan; it’s an invitation to treat assessment design itself as a professional learning activity.

Once those exemplars and rank orders exist, 🔗|Hub stores and organises them as evidence of “what good looks like” over time. A department, a university faculty, or a national body can build up a living library of student work and standards, rather than re‑inventing everything for each cohort. This speaks directly to the report’s description of teaching as a long‑term, evolving profession where standards and expectations need to grow cumulatively, not reset every year.

Finally, 📳|Live brings comparative judgement into everyday classrooms and programmes as Learning‑by‑Evaluating. Students and teachers can run short judging sessions on drafts, projects or performances. That turns assessment into a regular part of feedback, mentoring and metacognition rather than a single high‑stakes event. It also supports the report’s emphasis on helping students think for themselves, develop evaluative judgement, and see quality in each other’s work.

2. Turning autonomy into trust and leadership

Across systems, the report sees the same tension: policymakers want more professional autonomy, but they also need assurance that students are treated fairly and standards are coherent. Teaching is “becoming more of a team sport”, with collaboration, mentoring and shared practice at the centre.

In Rm Compare 💻| Studio rather than receiving rubrics designed elsewhere, teachers and subject experts work together in Studio to define and refine standards from real student work. Comparative judgement helps groups converge on shared views of quality while keeping space for professional debate. This strengthens teacher voice and identity: standards are something you help create, not just something you implement.

In 🔗|Hub, those decisions are turned into “Transparent, trust‑ready data for leaders”. Leaders can see how consistently different groups of judges apply standards, where there is legitimate diversity of judgement, and how performance shifts over time. Instead of relying on a single score, they have a richer picture of quality that fits the report’s call for trust‑based leadership, not compliance‑driven oversight.

📳|Live then spreads the work and responsibility across many professionals. The matrix captures this as “Shared workload, strong teacher agency”. A large set of judgements can be distributed across teachers (and, in some designs, students), but the system still foregrounds human decision‑making. Teachers remain the ones comparing, deciding and reflecting, with Live+ simply orchestrating the flow of pairs and capturing the evidence. That aligns well with the report’s finding that teacher satisfaction is tied less to pay alone and more to meaningful professional work, collaboration and a sense of agency.

3. Keeping humans at the centre of AI‑era assessment

The third theme is the one that has grabbed headlines: AI and educational technology. The report is explicit that general‑purpose GenAI tools are now part of education’s “lived reality”, but also that they must not simply do the thinking for students or take assessment out of teachers’ hands. It calls for human‑centred teaching, learning and assessment with GenAI, strong governance, and “humans in the loop” at all times.

The report points to a very pragmatic stance: let AI help with drafting prompts, variants and descriptors, but keep teachers as curators and final decision‑makers. 💻| Studio is a natural home for this: it’s where educators can see, edit and approve any AI‑suggested text in the context of real student work and shared standards, embodying the human‑in‑the‑loop principle.

The report urges systems to invest in GenAI‑related educational R&D and to build trustworthy data infrastructures. 🔗| Hub can support that by providing a controlled environment where AI is used to surface patterns (for example, typical features of borderline work, or inequities in task functioning) while humans decide what those patterns mean and how policy or pedagogy should respond.

AI can support things like drafting feedback suggestions, triaging which scripts might need a second look, or flagging inconsistencies for review. But the core act – deciding which piece of work is stronger in a comparison – remains human. That is exactly the kind of design the report has in mind when it talks about making AI an educational ally that amplifies human judgement rather than replacing it. RM Compare 📳| Live is built to help here.

An invitation to any system

The pressures they describe – richer learning, more autonomy, trustworthy AI – are shared across systems in all countries right now. The RM Compare ecosystem offers a practical pattern any ministry, agency, exam board or university can adapt:

- Use Studio to design richer tasks and co‑create standards.

- Use Hub to store and calibrate evidence over time.

- Use Live+ to run judgement where learning actually happens, with AI firmly in the role of assistant, not judge.

You don’t have to start big. A small pilot – one project‑based task, one subject area, one cohort – is enough to test how this pattern works in your own context. If the OECD report is the compass, the RM Compare ecosystem is one way of turning that direction into day‑to‑day reality.