- Opinion

The Human Skill That Still Eludes AI – And Why Assessment Needs Ground Truth

AI is arriving in education as if it were a cure‑all for workload and consistency. Sales decks promise tools that “judge writing like teachers”, “skip marking altogether”, and “cut workload by 90 per cent”. It is an attractive story in a system under pressure.

But if we listen carefully to the people building these systems – and to the artists responding to them – a different story emerges. The core human skill that good work depends on is still beyond today’s models. If we ignore that, we risk building assessment systems that are perfectly aligned to the wrong thing.

"Thanks for the song, but with all the love and respect in the world, this song is bullshit, a grotesque mockery of what it is to be human, and, well, I don’t much like it." Nick Cave, Red Hand Files 2023

What is the problem?

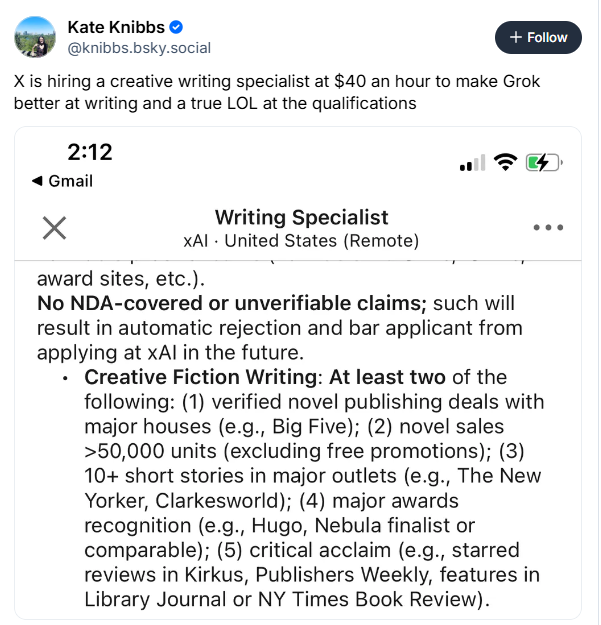

In “The Human Skill That Eludes AI”, published in The Atlantic (March 17th 2026), Jasmine Sun talks to AI leaders who are surprisingly candid. For all the progress, they have not yet built a model that can write well in the fully human sense. These systems are extraordinary pattern‑matchers. They digest vast archives of language and can generate fluent paragraphs on almost any topic. They are brilliant at looking like good writing. What they do not do is decide what is worth saying, take responsibility for a viewpoint, or feel the cost of being wrong.

When we praise a piece of writing in the real world, we almost never mean “grammatical and on topic”. We are usually responding to something deeper: judgement, originality, a sense that someone is thinking aloud and taking risks on the page. Those qualities are not extracted from a dataset. They are the result of years of practice, feedback and experience.

Nick Cave makes the same point from a songwriter’s perspective. In 2023, a fan sent him lyrics “in the style of Nick Cave” written by an AI. His response was merciless. He called the song “replication as travesty”: a collage of his favourite images that had not been earned. Data, he said, “doesn’t suffer”. An AI model has no grief, no faith, no failure to draw on. It can only reshuffle traces of other people’s experiences into new configurations. However convincing the style, the song is an empty costume, not a soul.

Put Jasmine Sun and Nick Cave together and you get a simple, awkward conclusion. AI is rapidly becoming excellent at imitating the surface of good work while remaining blind to much of what makes that work genuinely human.

"What I learned is that modern LLMs are built in a way that is antagonistic to great writing; they are engineered to be rule-following teacher’s pets that always have the right answer in hand" - Jasmine Sun, Atlantic

Why is this a problem in education assessment?

This would already be an interesting cultural moment if it stopped at essays and songs. It does not. In education, AI assessment and feedback tools are being marketed hard. They promise to evaluate writing, short answers and extended tasks in seconds, aligning neatly to existing rubrics and grade scales. For over‑stretched teachers, it sounds irresistible.

There are two deep problems if we take the Atlantic and Nick Cave seriously.

The first is construct drift. Most AI marking systems are trained to hit rubric descriptors: structure, vocabulary, coherence, task fulfilment. That is not a criticism; it is how they are designed. The danger is that, over time, “good writing” in our systems quietly becomes “whatever the model can reliably recognise and reward”. Voice, originality, insight and deep understanding are harder to specify and harder to model, so they get squeezed to the margins. The construct bends to fit the tool.

The second is that performance starts to masquerade as learning. Generative tools now make it easy for students to produce polished writing on demand. With a few prompts, they can generate a fluent, well‑structured answer that looks like strong work. If we then feed that work into AI markers tuned to the same surface features, we risk certifying the performance of the tool rather than the capability of the learner. The Atlantic piece describes exactly that pattern: AI fills the page with plausible prose that has not required the slow human labour of learning to write. Cave’s phrase, “replication as travesty”, names the failure mode we should fear.

The problem is not confined to writing. Across disciplines, we are beginning to see AI generate designs, code, lab reports, presentations, even pieces of art that look impressive. What still eludes it is the slow, situated human judgement underneath: deciding which problems are worth solving, what trade‑offs to make, how to reason ethically, how to communicate to a particular audience in a particular context. Those are precisely the things education claims to care about.

What happens if we do nothing and let AI drive?

If we simply bolt AI assessment onto existing systems and chase efficiency, the trajectory is predictable.

We will reward conformity over originality. Models are optimised around central tendencies: the typical responses in their training data. Unusual, risky or voice‑driven work becomes harder to recognise and easier to mis‑score. Students quickly learn to optimise for what the machine likes, not for authentic communication or deep thought.

We will inflate apparent gains while hollowing out learning. Students who lean heavily on AI tools can present fluent, tidy work while skipping the difficult cognitive work of planning, drafting, revising and reflecting. When both production and marking are mediated by AI, assessment starts to measure a student’s prompt‑craft and tool‑use more than their understanding.

We will lock ourselves into yesterday’s standards. Rubrics and grade descriptors were designed in a pre‑AI world. If we now treat them as fixed and train models hard against them, we risk entrenching a narrow, surface‑heavy notion of quality at the very moment we should be re‑examining it.

And we will invite a trust backlash. As regulators are already warning, high‑stakes uses of AI demand clear evidence and strong human oversight. If systems rush to hand complex judgements over to opaque models and later evidence shows systematic misjudgements of certain kinds of students or work, trust in both AI and assessment will suffer.

Left alone, the system drifts towards an education model optimised for work that looks good and marking that runs fast – exactly the combination the Atlantic article and Nick Cave warn against.

The methodology described below by Jasmine Sun in her Atlantic article is not the answer.

What is not the answer?

One tempting response is the idea of AI as co‑judge.

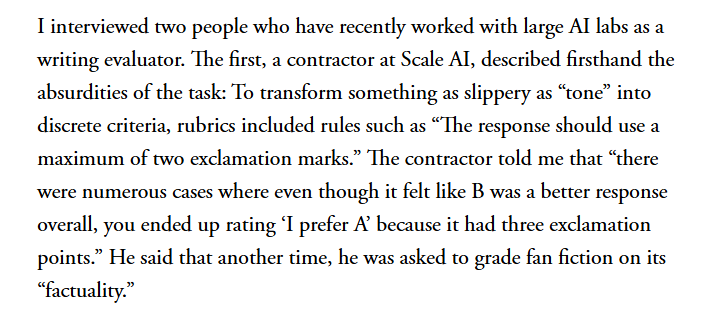

In this pattern, large language models sit alongside teachers in comparative judgement sessions. Trials show that AI’s pairwise decisions match teacher decisions much of the time on certain writing tasks. On that basis, some projects now advocate letting AI complete the majority of judgements, with human teachers doing a small, sampled subset for calibration and oversight. Workload drops dramatically. The headline messages talk about AI as “a viable alternative” and “uncannily good at judging writing”.

The difficulty is that agreement is not the same thing as understanding. The same class of models that AI leaders say cannot write well in the deepest sense is presented as a near‑substitute judge of what good writing is. Pairwise comparisons do not make that tension go away. And as soon as efficiency becomes the main story, the centre of gravity shifts. Comparative judgement, which was originally designed to put human, holistic judgement at the centre, is reduced to a method for generating labels and agreement statistics so that AI can take over most of the work. Human comparative judgement becomes calibration for an AI‑driven process, not the ground truth.

A related move, inside AI labs, is to use comparative judgements as better training signals for rubric‑based models. Instead of asking a model for a score directly, researchers ask it which of two responses is better, then fit those decisions back to existing score scales and descriptors. Comparative judgement here is a clever data‑collection trick in service of rubric enforcement. The rubric, already biased towards what is easy to describe and count, becomes the ceiling rather than the floor of the construct.

In both cases, comparative judgement ends up serving rubrics and models rather than being a way for humans to rethink what quality should mean in an AI world. That is not the direction we need.

What is the solution? Human ground truth, then AI

The alternative is not to push AI out of assessment altogether. It is to decide clearly where authority lies.

At RM Compare, the starting point is simple. AI can help with speed, scale and pattern‑spotting. Only humans can define what “good” looks like for complex human outcomes – whether that is writing, design, performance, investigation or anything else that resists simple right‑or‑wrong marking.

Adaptive Comparative Judgement (ACJ) is how we turn that principle into infrastructure. In an RM Compare session, human judges compare pairs of authentic artefacts – essays, portfolios, performances, prototypes, images, videos – and decide which is better overall against a holistic statement of quality. There are no points in the moment of judgement, no juggling of sub‑criteria. The focus is on the construct we actually care about.

Over many comparisons, a stable, shared scale of quality emerges from those decisions. The expert community effectively manufactures its own ground truth for that task and context. There is no official answer key for a reflective essay, a design brief, a piece of art or a drama performance. Comparative judgement lets humans build a standard directly from their judgements, without forcing everything back through a grid.
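To make the idea of a scale emerging from pairwise decisions concrete, here is a minimal sketch in Python. It assumes a Bradley-Terry style model, a common statistical choice for paired comparisons, fitted by plain gradient ascent with a small ridge penalty. It is not a description of RM Compare's own algorithm, it leaves out the adaptive selection of pairs, and the function name, script labels and judgements are all hypothetical.

```python
import math
from collections import Counter


def fit_quality_scale(judgements, ridge=0.01, n_iter=5000):
    """Fit a Bradley-Terry style scale from (winner, loser) pairs.

    Returns a dict mapping each item to a quality estimate in logits.
    The ridge penalty is an illustrative assumption; it keeps estimates
    finite even for an item that wins (or loses) every comparison.
    """
    items = sorted({item for pair in judgements for item in pair})
    theta = {item: 0.0 for item in items}

    # A conservative step size based on the busiest item keeps the ascent stable.
    appearances = Counter(item for pair in judgements for item in pair)
    step = 1.0 / (max(appearances.values()) + ridge)

    for _ in range(n_iter):
        # Start each gradient with the ridge term, then add the judgement terms.
        grad = {item: -ridge * theta[item] for item in items}
        for winner, loser in judgements:
            # Modelled probability that the observed winner beats the loser.
            p_win = 1.0 / (1.0 + math.exp(theta[loser] - theta[winner]))
            grad[winner] += 1.0 - p_win
            grad[loser] -= 1.0 - p_win
        for item in items:
            theta[item] += step * grad[item]
    return theta


# Hypothetical judgements: each tuple is (preferred script, other script).
judgements = (
    [("A", "B")] * 3 + [("B", "A")]
    + [("B", "C")] * 3 + [("C", "B")]
    + [("A", "C")] * 4
)

scale = fit_quality_scale(judgements)
for item, value in sorted(scale.items(), key=lambda kv: -kv[1]):
    print(item, round(value, 2))
```

No judge ever assigns a score here. The positions on the scale are inferred entirely from who was preferred to whom, which is why the resulting standard belongs to the judging community rather than to a rubric.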

Once that human ground truth exists, AI has a clear and bounded role. Models can be trained against the ACJ scale so that, in routine regions of the distribution, their scores approximate human decisions. Their outputs can be continually monitored against fresh comparative judgements to detect drift, bias and blind spots. In low‑stakes settings, AI can take on a share of the workload. For high‑stakes decisions and for standard‑setting, human ACJ remains the arbiter.
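The monitoring step can be sketched in the same spirit. The Python below assumes that each fresh human judgement carries a tag describing the kind of work involved, and it reports how often the model's scores put the human-preferred script first within each tag. The tags, the scores and the 0.8 review threshold are hypothetical choices for illustration, not values taken from RM Compare or from any trial.

```python
from collections import defaultdict


def agreement_report(model_scores, fresh_judgements, threshold=0.8):
    """Compare model scores with fresh human pairwise judgements, per tag.

    fresh_judgements holds (winner, loser, tag) tuples, where the winner is
    the script the human judge preferred. The threshold is an assumed cut-off
    below which a slice is flagged for human review.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for winner, loser, tag in fresh_judgements:
        totals[tag] += 1
        # The model agrees when it scores the human-preferred script higher.
        if model_scores[winner] > model_scores[loser]:
            hits[tag] += 1

    report = {}
    for tag, n in totals.items():
        rate = hits[tag] / n
        report[tag] = {
            "agreement": rate,
            "n": n,
            "needs_human_review": rate < threshold,
        }
    return report


# Hypothetical model scores and fresh human judgements.
model_scores = {"s1": 0.9, "s2": 0.4, "s3": 0.7, "s4": 0.6, "s5": 0.2}
fresh_judgements = [
    ("s1", "s2", "routine"),
    ("s3", "s5", "routine"),
    ("s1", "s4", "routine"),
    ("s4", "s2", "routine"),
    ("s5", "s3", "unusual"),
    ("s2", "s4", "unusual"),
    ("s1", "s5", "unusual"),
]

report = agreement_report(model_scores, fresh_judgements)
for tag, row in report.items():
    print(tag, row)
```

Splitting the figures by tag is the point of the design. A single headline agreement rate can look healthy while the model consistently misjudges the unusual, voice-driven work that human judges value, and a per-slice report makes that blind spot visible.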

In this design, AI does not quietly redefine what counts as good. It is held accountable to a standard that originates in human expertise and experience – the very thing that still eludes it.

This is bigger than writing

We have focused on writing because that is where the Atlantic article and Nick Cave speak most directly. But the underlying lesson applies anywhere the work is rich and the judgement is human.

RM Compare is already used to assess artwork, design and technology projects, science investigations, performances, mathematics proofs, language portfolios and more. The system is deliberately built to accept almost any kind of item – documents, images, audio, video, web content – because real capability often shows up in complex artefacts, not just in short written responses.

In all of these domains, AI will get better at simulating aspects of performance. It will generate designs, code, images, music and reports that look ever more impressive. What will still matter, and still be hard to automate, is the slow human work underneath: deciding which ideas are worth pursuing, which trade‑offs to make, how to reason and act responsibly in a real context.

That is the human skill that still eludes AI. It is not just great writing. It is the broader capacity to exercise judgement in ambiguous, open‑ended situations and to create work that carries the imprint of a particular person thinking and acting in the world.

RM Compare’s role is to make that judgement visible and scalable – and to ensure that any AI we use in assessment is held to it, not allowed to overwrite it.