- AI Validation

Who Gets to Define “Learning” in an AI World? (Part 1/2)

OpenAI’s new “Learning Outcomes Measurement Suite” is more than a product announcement; it is a bid to define how AI‑mediated learning will be measured – and, by implication, what will count as learning in the years ahead. Their recent education push wraps research, product and policy language into a single, compelling story about how students learn with AI.

For those of us who care deeply about assessment, that raises a simple but vital question: who actually gets to define learning in an AI world?

At RM Compare, we welcome the renewed focus on learning outcomes and evidence. If AI is going to sit at the heart of lessons, homework and study, then schools and systems deserve more than anecdotes about “engagement” and “creativity”. But there is also a risk. If we rely only on the telemetry and data points that AI systems can easily collect, something essential will be left out.

We call the missing piece a human‑grounded validity layer: independent judgements of complex work that sit outside any single vendor’s platform. RM Compare provides that layer through Adaptive Comparative Judgement (ACJ).

When Metrics Start to Define the Thing They Measure (Goodhart's Law)

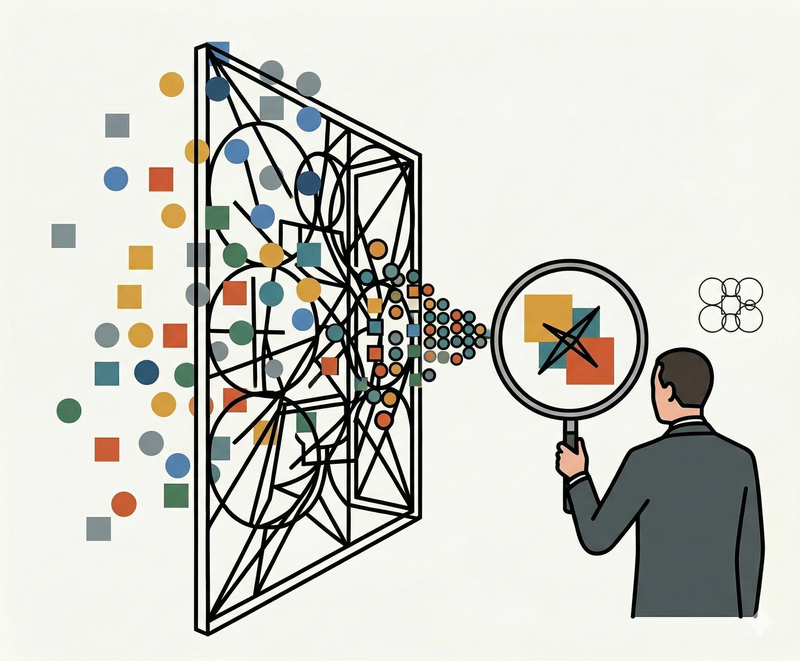

AI‑rich learning environments generate vast amounts of data. Every click, query, hint request and revision to a draft leaves a trace. Over time, these traces become tempting proxies for what we care about most.

The risk is not that such signals are useless; the risk is that they are too available. We start optimising for what we can see, rather than what we truly value.

Over time, “engagement” can quietly become whatever looks active in a log file, even if the student is simply cycling through hints. “Creativity” can drift toward whatever appears novel to a model, whether or not a human judge would see it as insightful, ethical or fit for purpose. “Metacognition” can narrow to the kinds of self‑explanations a system can parse, while more tacit forms of judgement and reflection slip through the net.

Once the metrics are in place, dashboards, pilots and policy papers begin to orbit around them. The conversation shifts from “What do we mean by deep learning in this subject?” to “How can we move these numbers?” The map, gradually and quietly, becomes the territory.

A Different Starting Point: Judgement Before Telemetry

Adaptive Comparative Judgement starts from the opposite direction.

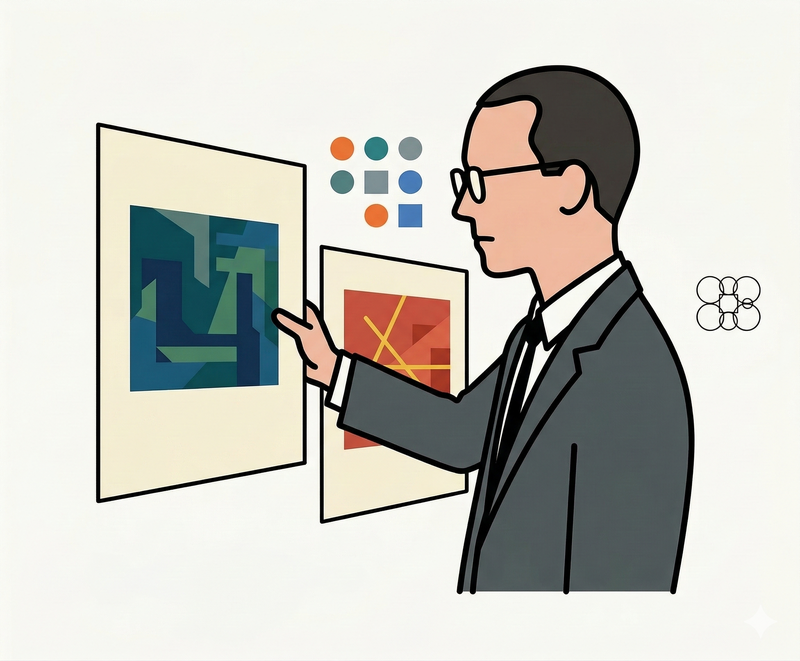

Instead of asking “What can we infer from the data exhaust of an AI system?”, ACJ begins with a much more direct question: when experienced judges look at real student work, what do they think is better?

In an ACJ session, pairs of artefacts – essays, design projects, lab reports, performances, portfolios – are presented to judges. For each pair, a judge decides which piece is stronger overall. No rubric with a dozen tick‑boxes. No demand that every nuance be squeezed into a numeric scale. Just informed, professional judgement applied repeatedly across many pairs.

From these apparently simple choices, powerful structure emerges. Because many judges each make many comparisons, individual quirks average out. A stable rank order of work appears, even when the outcomes are complex and open‑ended. More importantly, the process surfaces the tacit criteria that experts actually use: the coherence of an argument, the elegance of a solution, the authenticity of a performance, the maturity of a line of reasoning.

RM Compare exists to make this possible at scale. Adaptive algorithms ensure that judges spend their time on comparisons that are most informative. Panels can include teachers, external examiners, employers, even students. The result is not just a set of scores, but a living, shared sense of “what good looks like” in a given context.

Where AI‑native approaches say “let us infer learning from interaction data”, ACJ says “let us ask informed humans to tell us which work is better, and build our understanding from there”.

Why a Human‑Grounded Validity Layer Matters

This is not an argument against AI‑based measurement. On the contrary, richer data about how students interact with content, tools and peers can illuminate learning processes in ways we have never had before.

The problem arises when those internal metrics become the only story we tell about learning.

A human‑grounded validity layer acts as a check and balance. It sits outside any one platform, model or dashboard and asks: when we look at the work itself – stripped of logs and labels – does it really show the gains our metrics claim? Are we seeing more thoughtful essays, more robust designs, more convincing arguments, more original ideas?

By running ACJ on student work produced under different conditions – with and without certain AI supports, before and after adopting a new tool, across cohorts or institutions – we can test whether shifts in AI‑derived indicators correspond to shifts in what human judges recognise as quality. When they line up, our confidence in the metrics grows. When they diverge, we have discovered something important about the limits of our instruments.

In that sense, the validity layer does not compete with AI analytics; it grounds them.

Keeping the Profession in the Loop

There is also a question of professional agency.

When a small number of technology companies define the constructs, instruments and dashboards for “learning with AI”, educators risk becoming consumers of someone else’s theory of learning. They are invited to respond to analytics, but not necessarily to shape the standards behind them.

Comparative Judgement pushes in the opposite direction. It treats teachers and other experts as active co‑authors of the standard. Their repeated, situated choices gradually reveal – and refine – the community’s sense of quality. They can interrogate outliers, discuss exemplars, and adjust expectations as disciplines, technologies and social needs evolve.

Learners, too, can be brought into that conversation: seeing ranked exemplars, discussing why one piece might be judged stronger than another, and internalising a richer understanding of what counts as good work in their field.

In an AI world, that ongoing, human process of defining “better” is not an optional extra. It is the core of educational self‑determination.

What Counts as ‘Ground Truth’?

Even within OpenAI’s own framing, there is an acknowledgement that AI cannot define learning entirely on its own. In their diagrams, a small box at the bottom is reserved for “partner‑provided ground truth”: the evidence that schools, universities and systems bring to the table.

Right now, the examples given are familiar: exam scores, classroom observations, attendance. These have their place, but they are blunt tools. They tell us little about the fine‑grained quality of complex work, or the kinds of capabilities – creativity, collaboration, problem‑solving – that matter most in a changing world.

If AI is serious about learning outcomes, that ground‑truth box needs to be filled with something richer.

In Part 2 of this series, we will look directly at that question: why traditional measures are not enough, and how ACJ through RM Compare can offer a deeper, more ambitious form of ground truth for AI‑era education.