
Workload, Grades and Transparency: Why ACJ Needs a Different Starting Point

When ACJ is judged by rubric‑first assumptions, it will always look like a poor imitation of traditional marking: more judgements, awkward grade mapping, fewer boxes ticked. If we instead start from its own epistemology - holistic, relative, expert judgement as the primary evidence of quality - then workload becomes a design and scheduling question, not an intrinsic flaw. Grade conversion becomes a standard‑setting exercise on a defensible scale, not an unsolved mystery. Transparency becomes an opportunity to move beyond pseudo‑precision towards exemplars, boundary scripts and richer feedback practices. In other words, ACJ is not a bolt‑on replacement for rubrics; it is a different way of knowing and communicating what quality looks like. Addressing workload, grades and transparency effectively means taking that difference seriously from the start.

| Area | Do | Do not |
|---|---|---|
| Workload | Design tasks for whole‑piece, holistic comparison; spread judging across a panel; plan to a reliability target, not a fixed number of rounds. | Bolt ACJ onto long, multi‑part exams with tiny judge panels and tight legacy marking windows. |
| Grade conversion | Use boundary scripts and exemplars to anchor cut‑scores on the ACJ scale; treat grade setting as a transparent policy step after ranking. | Expect ACJ to reproduce existing rubric score distributions automatically or to “output grades” without standard setting. |
| Transparency | Share ranked exemplars at boundaries, explain how the process works, and separate grading from feedback with clear commentary or crits. | Rely on hidden algorithms with no exemplars, or assume a rubric grid is automatically more transparent to students. |

Workload as a design problem

From a distance, ACJ looks expensive: instead of one marker working through a pile of scripts with a rubric, you see many judges making many pairwise decisions. The headline is “more clicks,” so the assumption is “more work.” Up close, the picture is different. The crucial question is not how many judgements are made in total, but what kind of work each judgement replaces in the real world of marking.

Take a design course. Under a traditional system, a tutor might spend ten or fifteen minutes on each project, ticking through criteria for creativity, functionality, technical competence, user focus and “aesthetic impact.” They then have to reconcile all of that into a single mark that feels fair. Most of the effort goes into wrestling a complex, holistic impression into a narrow grid. In an ACJ session using the same projects, the same tutor can look at two portfolios side by side and decide, in under a minute, which has stronger overall impact, coherence and craft. The judgement is still expert and demanding, but it is focused on a single decision rather than on filling in a table.

Check out our Cain College Case Study.

At scale, that difference matters. If tasks are designed so that a whole‑piece comparison really is meaningful, each ACJ judgement stands in for several separate rubric decisions. Workload becomes a function of task design, panel size and scheduling, not an intrinsic property of the method. When ACJ pilots feel overwhelming, it is often because they are layered on top of existing marking loads, or because a tiny group of examiners is asked to compare long, multi‑part scripts under acute time pressure. That is an implementation choice, not an inevitable consequence of ACJ.
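To make the “function of task design, panel size and scheduling” point concrete, here is a rough planning sketch in Python. It is back-of-envelope arithmetic only: `judging_workload` is a hypothetical helper, and every number in the usage note is an illustrative assumption, not a figure from a real session.

```python
import math


def judging_workload(n_scripts, judgements_per_script, n_judges, seconds_per_judgement):
    """Estimate the size of a comparative judgement session.

    All four inputs are planning assumptions chosen by the course team.
    Returns (total_judgements, judgements_per_judge, hours_per_judge).
    """
    # Each comparison shows two scripts, so one judgement counts towards
    # the "times judged" total of both scripts involved.
    total_judgements = math.ceil(n_scripts * judgements_per_script / 2)

    # Spread the comparisons evenly across the panel.
    judgements_per_judge = math.ceil(total_judgements / n_judges)

    # Convert each judge's share into hours of judging time.
    hours_per_judge = judgements_per_judge * seconds_per_judgement / 3600

    return total_judgements, judgements_per_judge, hours_per_judge
```

With hypothetical inputs of 200 scripts, 10 judgements per script, a panel of 10 judges and 60 seconds per decision, this gives 1,000 comparisons in total, 100 per judge, and a little under two hours of judging each. Changing the panel size or the judging window changes the per-judge load without changing the method, which is the sense in which workload is a design decision.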

Grades as policy, not mystery

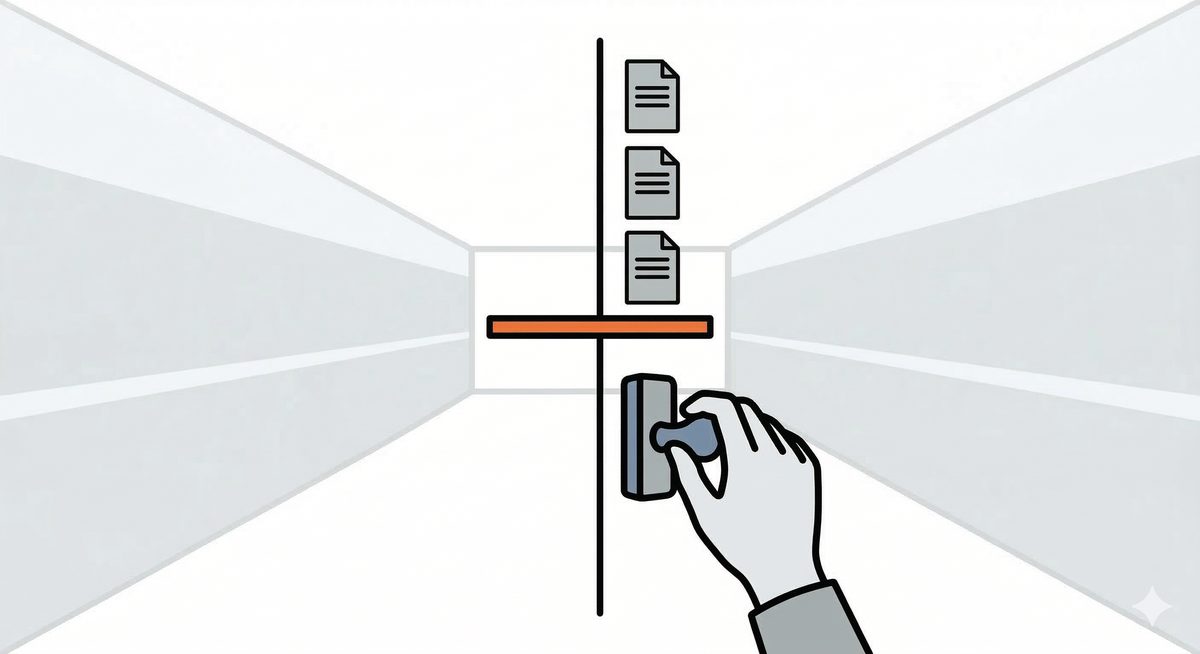

Rubrics tempt us to believe that grades are hiding inside the mark scheme, waiting to be uncovered. Add up enough points across enough criteria and you feel you have discovered a truth about a script. ACJ forces us to be more honest. It gives you something else: a well‑ordered scale of performance built from many expert comparisons. But it does not pretend that the labels you eventually attach - A, B, C, 1–9 - are anything other than decisions.

Experienced examiners often talk about “borderline pass” answers and “clear first‑class” scripts in ways that go far beyond any checklist: they notice how a candidate frames issues, handles ambiguity, integrates authorities and exercises judgement. In an ACJ process, those impressions are captured directly. Examiners compare whole scripts, build up a stable rank order, and only then sit down with scripts from different points on the scale to decide which ones embody the minimum acceptable pass, which represent a typical merit, which clearly belong in the top band. Those chosen scripts become concrete boundary exemplars. Cut‑scores are then placed on the ACJ scale at the points where those exemplars sit.

The underlying politics of where to set the bar have not vanished; they are simply made visible. The mapping from scale to grades can be documented, reviewed and adjusted over time in exactly the same way as cut‑scores on test marks. The difference is that the ordering beneath those decisions is driven by consistent professional judgement across many comparisons, rather than by noisy totals from several loosely correlated rubric boxes. Grade conversion stops being an unsolved mystery and becomes a standard‑setting exercise on top of a scale that is built for that purpose.
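The boundary-exemplar step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how any awarding body or platform implements it: the function name `assign_grades`, the script IDs, the scale values and the grade labels are all hypothetical, and each exemplar is assumed to mark the minimum scale value for its grade.

```python
from bisect import bisect_right


def assign_grades(scale_scores, boundary_exemplars, below_label="Fail"):
    """Map scale values to grades using boundary exemplar scripts.

    scale_scores: dict of script_id -> value on the comparative judgement scale.
    boundary_exemplars: list of (grade_label, script_id); each exemplar is
        assumed to be the lowest script that still earns that grade.
    below_label: grade for scripts below the lowest boundary (an assumption).
    """
    # A cut-score sits exactly where its exemplar sits on the scale.
    cuts = sorted((scale_scores[sid], label) for label, sid in boundary_exemplars)
    cut_values = [value for value, _ in cuts]
    labels = [label for _, label in cuts]

    grades = {}
    for script_id, score in scale_scores.items():
        # Count how many cut-scores this script meets or exceeds.
        reached = bisect_right(cut_values, score)
        grades[script_id] = labels[reached - 1] if reached else below_label
    return grades


# Hypothetical scale values and exemplar choices, for illustration only.
scores = {"s1": -2.0, "s2": -0.5, "s3": 0.3, "s4": 1.1, "s5": 2.4}
exemplars = [("Pass", "s2"), ("Merit", "s4")]
grades = assign_grades(scores, exemplars)
```

The code only records the policy decision: moving a boundary means choosing a different exemplar, and the ordering underneath is untouched.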

Take a look at our recent blog post about GCSE grade reliability.

Transparency beyond pseudo‑precision

The final worry is transparency. Students (and sometimes regulators) are reassured by numbers against criteria: 16/20 for analysis, 7/10 for structure, 4/5 for use of authorities. It looks clear and precise. But ask a student what they actually learned from those numbers, and the picture is murkier. A design student may see “3/5 for aesthetics” without any real sense of why their work feels weaker than a peer’s. A law student may see ticks for issue‑spotting and citation yet still not understand why their argument leaves readers unconvinced. The transparency is mostly cosmetic.

ACJ invites a different approach. In a programme using comparative judgement formatively, for instance, students might upload answers to a problem question and then participate as judges in comparing anonymised scripts. They see genuine variation in how peers argue, structure and justify their positions. They watch their own script rise or fall in the ordering as more judgements come in. Later, when the course team identifies boundary scripts and publishes anonymised examples with commentary (“this script sits just at the pass boundary because…”, “this one exemplifies our top band because…”), students can see real work at different levels, grounded in the way their expert community actually reads and values arguments.

In a design course, for example, instead of hiding aesthetic judgements behind a single number on a rubric, tutors can show a spread of ranked work: early, tentative ideas at one end; technically competent but dull solutions in the middle; genuinely compelling, well‑resolved projects at the top. Because that ordering comes from dozens or hundreds of comparisons, staff can say with confidence “this is what we, as a community, recognise as stronger design.” Feedback can then be layered on through crits, annotations and conversations, using the ACJ ordering as a backbone rather than as a black box.

On this view, transparency is less about reverse‑engineering a grade from checkboxes and more about opening up the standards themselves: showing exemplars at boundaries, explaining the reasoning around those boundaries, and situating each student’s work within that landscape. ACJ does not stop you giving feedback; it pushes you to separate the act of establishing trustworthy ordering from the equally important act of explaining and coaching.

Check out our thoughts on an Appeal Process with ACJ.

How RM Compare builds this in by design

These ideas are not abstract for us; they are baked into how RM Compare works.

On workload, RM Compare uses adaptive pairing and chaining so that every judgement does as much work as possible. The system quickly moves judges away from random pairings and towards comparisons that are most informative for the emerging scale, while chaining keeps one familiar script on screen so judges can decide faster without losing rigour. In large‑scale projects we see this translate into fewer judgements per script for a given reliability and into judging that can be spread flexibly across a wider panel and a longer window, rather than compressed into a single intense marking period.
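For readers who want a feel for the general idea, the sketch below shows one simple way adaptive pairing with chaining could work. It is not RM Compare's algorithm, which is not described here: it assumes that "most informative" can be approximated by "closest current estimates", and `next_pair`, the script IDs and the estimate values are all hypothetical.

```python
def next_pair(estimates, already_compared, keep=None):
    """Choose the next pair of scripts to show a judge.

    estimates: dict of script_id -> current scale estimate.
    already_compared: set of frozenset({a, b}) pairs already judged.
    keep: optional script_id to retain on screen (chaining); if given,
        only pairs containing that script are considered.
    Returns a (script_a, script_b) tuple, or None if no pair is available.
    """
    ids = sorted(estimates)
    candidates = []
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            if frozenset((a, b)) in already_compared:
                continue  # do not repeat a pairing
            if keep is not None and keep not in (a, b):
                continue  # chaining: one script stays on screen
            # Assumption: a smaller gap in estimates means a closer call,
            # so the judgement tells us more about the ordering.
            candidates.append((abs(estimates[a] - estimates[b]), a, b))
    if not candidates:
        return None
    _, a, b = min(candidates)
    return (a, b)


# Hypothetical mid-session estimates, for illustration only.
estimates = {"A": 0.0, "B": 0.2, "C": 1.5, "D": 1.6}
seen = {frozenset(("C", "D"))}
free_choice = next_pair(estimates, seen)          # closest unseen pair overall
chained = next_pair(estimates, seen, keep="C")    # keep script C on screen
```

In this toy data the free choice pairs A with B, the two closest scripts not yet compared, while chaining on C pairs it with its nearest unseen neighbour, B. A production system would use a more careful information criterion and would balance how often each script appears.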

On grade conversion, RM Compare always produces both an ordered list and an underlying score scale. Awarding bodies then use that scale as the backbone for setting grade boundaries: they pull out scripts at different points, agree which should define key thresholds, and lock in cut‑scores around those exemplars. That is how projects like ISEB’s iPQ have used RM Compare to replace traditional marking while still meeting the demands of high‑stakes grading and regulatory scrutiny. The point is not that RM Compare “outputs grades”, but that it provides a defensible measurement layer on which grade‑setting decisions can rest.

On transparency, RM Compare captures every judgement and makes it available for analysis and conversation. Educators can see how many times each script was judged, how consistent those judgements were, and which pieces of work sit at important boundaries. Many schools and universities then go further and use that ranked work for feedback: sharing anonymised exemplars at different levels, running “learning by evaluating” activities where students act as judges, and using the collective ordering as the backbone for discussions about standards. In practice, that often gives teachers and students more to talk about, and more concrete examples to point to, than a traditional rubric ever did.

Conclusion

When we judge ACJ from within a rubric mindset, it will always feel awkward and incomplete. When we let its own logic lead - trusting holistic, comparative expert judgement as the primary evidence of quality - those familiar objections about workload, grades and transparency start to look more like design questions than deal‑breakers. Thoughtful task design, explicit standard setting and richer use of exemplars and audit trails are all within our control, and platforms like RM Compare exist precisely to make those choices practical at scale. The real challenge is not whether ACJ can cope with the “big three” concerns, but whether we are willing to rethink what good assessment looks like when we stop pretending that grids and numbers are the only route to fairness.