What Might an Appeals Process Look Like with RM Compare?

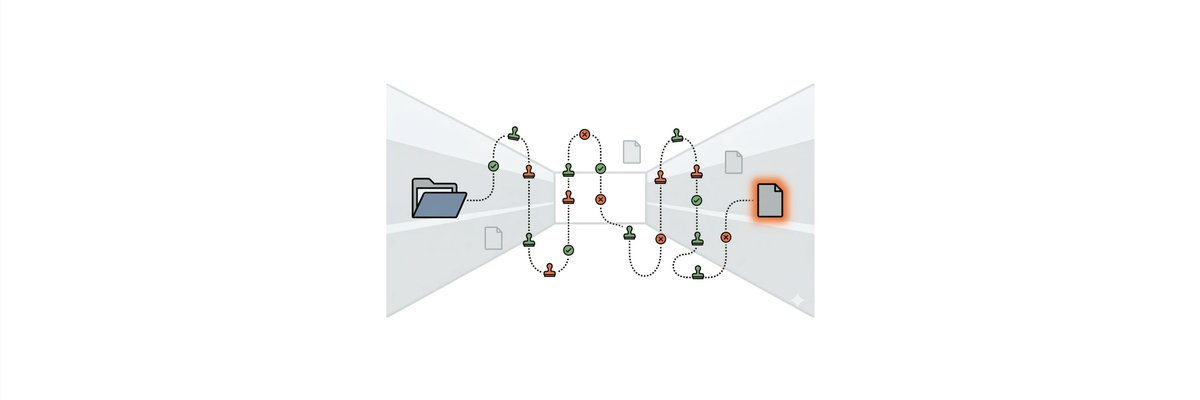

As more awarding bodies explore Adaptive Comparative Judgement (ACJ) for high‑stakes assessment, a question comes up very quickly: what happens when a candidate appeals? In a world of scripts and marks, the answer feels familiar: you re‑check the marking, maybe re‑mark the script, and see if the grade should change. With ACJ, and especially with a platform like RM Compare, the evidence looks different, but the principles do not.

This post sketches what a fair, defensible appeals process can look like when RM Compare sits at the heart of grading. The short version is that appeals do not disappear; they become more transparent and more evidence‑rich, because every judgement and every decision point is logged.

From “re‑mark the script” to “interrogate the process”

| Traditional | RM Compare / ACJ |
|---|---|
| Was the marking procedurally correct? | Did this candidate’s work pass through the intended ACJ process correctly (right session, right cohort, enough judgements, no technical issues)? |
| Was there a clerical or processing error? | Has their scale score been mapped correctly to the agreed grade boundaries? |
| Is there evidence that this script was marked unfairly or inconsistently? | Is there anything in the pattern of judgements that suggests their position on the scale might not fairly reflect the collective view? |

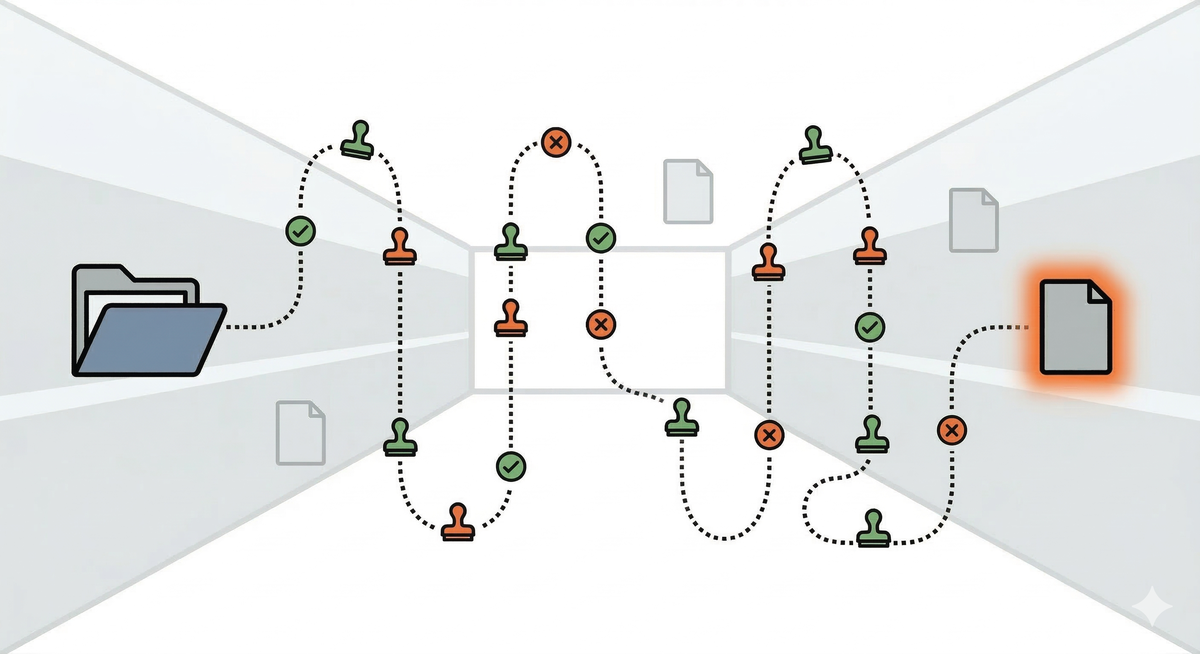

Stage 1: Administrative and process checks

An appeals process built around RM Compare should start, as appeals do now, with a light‑touch review of the result.

At this stage, the awarding body confirms that the candidate’s work was assigned to the correct session and cohort, that it was included in the ACJ run, and that there were no technical failures (for example, a corrupted upload or a script that never became available to judges). It then checks the judgement count for that script: did it reach the expected minimum for this assessment, in line with the design of the session?

Next comes the mapping from scale to grade. RM Compare outputs both an ordered list of work and an underlying score for each script. The appeals team verifies that the candidate’s score has been mapped to the right grade boundary for that session and series, using the cut‑scores that were agreed at awarding. This is analogous to a clerical check in a traditional system, but uses the ACJ scale instead of raw marks.
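The two Stage 1 checks described above, judgement count and scale‑to‑grade mapping, are mechanical enough to sketch in a few lines of Python. This is an illustration only, not RM Compare's API: the cut‑scores, grade labels, minimum judgement count and function names are all hypothetical, and a real check would read them from the awarding body's own records for the session.

```python
from bisect import bisect_right

# Hypothetical cut-scores on the ACJ scale, lowest boundary first.
# Real values would be those agreed at awarding for the session and series.
CUT_SCORES = [(-1.5, "Pass"), (0.0, "Merit"), (1.2, "Distinction")]
BELOW_LOWEST = "Unclassified"
MIN_JUDGEMENTS = 10  # assumed design minimum for this session


def grade_for(score: float) -> str:
    """Return the highest grade whose cut-score the scale score meets."""
    thresholds = [cut for cut, _ in CUT_SCORES]
    index = bisect_right(thresholds, score)
    return BELOW_LOWEST if index == 0 else CUT_SCORES[index - 1][1]


def stage_one_check(score: float, judgement_count: int, issued_grade: str) -> list[str]:
    """Collect any Stage 1 discrepancies for one script."""
    problems = []
    if judgement_count < MIN_JUDGEMENTS:
        problems.append(
            f"only {judgement_count} judgements (minimum {MIN_JUDGEMENTS})"
        )
    expected = grade_for(score)
    if expected != issued_grade:
        problems.append(f"issued {issued_grade!r} but score maps to {expected!r}")
    return problems
```

An empty list means the clerical checks found nothing. Any entry is a concrete, explainable reason either to correct the result or to escalate the appeal.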

Most appeals can be resolved at this stage. If a candidate has been placed in the wrong cohort or a transcription error has occurred in transferring grades to certificates, the fix is straightforward. If no such issues are found, but concerns remain, the appeal can progress to a deeper review.

Stage 2: Evidence‑based review of the judgements

The second stage looks more closely at the judgements that underpin the candidate’s position on the scale. Here, the key advantage of RM Compare is that it stores a complete log of the assessment process.

An appeals team can see which other scripts the candidate’s work was compared with, which way those decisions went, and how those other scripts sit on the final scale. This makes it possible to reconstruct the “local neighbourhood” of the candidate’s script: the few pieces of work that ended up just above and just below it. Senior examiners can then review this small cluster, together with the agreed boundary scripts, to sense‑check whether the candidate’s relative position looks plausible.
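As a rough sketch of what reconstructing the local neighbourhood involves, the Python below assumes the session data has been exported in two simple, hypothetical shapes: a mapping from script ID to scale score, and a list of `(judge, winner, loser)` tuples. Neither is RM Compare's actual export format, and the function names are invented for illustration.

```python
def local_neighbourhood(scale, script_id, k=3):
    """Return the k scripts just above and just below script_id on the scale.

    `scale` maps script IDs to scale scores (higher means stronger).
    Fewer than k scripts are returned near the top or bottom of the scale.
    """
    ranked = sorted(scale, key=scale.get, reverse=True)
    position = ranked.index(script_id)
    above = ranked[max(0, position - k):position]
    below = ranked[position + 1:position + 1 + k]
    return above, below


def comparisons_for(judgements, script_id):
    """List each logged comparison involving script_id as (opponent, won)."""
    summary = []
    for _judge, winner, loser in judgements:
        if winner == script_id:
            summary.append((loser, True))
        elif loser == script_id:
            summary.append((winner, False))
    return summary
```

The first function gives senior examiners the small cluster of scripts to read side by side. The second shows which decisions placed the candidate's script where it is.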

The team can also inspect judge behaviour for the comparisons involving this candidate. If the data show that a particular judge was highly inconsistent across the session, or took unusually long on many decisions, that judge’s influence can be scrutinised. In some cases, if a judge is identified as a clear outlier for the session as a whole, their judgements may already have been down‑weighted or removed at the awarding stage; the appeals team can verify that this has been applied consistently.
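One crude way to picture this kind of judge scrutiny is to ask how often each judge's decisions agree with the final scale. The sketch below is an assumption‑laden proxy, not the misfit statistic a platform would report. It reuses the hypothetical `(judge, winner, loser)` tuples and script‑to‑score mapping, and the 0.6 threshold is arbitrary.

```python
from collections import defaultdict


def judge_agreement(judgements, scale):
    """Proportion of each judge's decisions that agree with the final scale."""
    agreed = defaultdict(int)
    total = defaultdict(int)
    for judge, winner, loser in judgements:
        total[judge] += 1
        # A decision "agrees" when the chosen winner also sits higher on the scale.
        if scale[winner] > scale[loser]:
            agreed[judge] += 1
    return {judge: agreed[judge] / total[judge] for judge in total}


def flag_judges(judgements, scale, threshold=0.6):
    """Return judges whose agreement rate falls below the (arbitrary) threshold."""
    rates = judge_agreement(judgements, scale)
    return sorted(judge for judge, rate in rates.items() if rate < threshold)
```

A flagged judge is a prompt for human review, not a verdict. Disagreement with the final scale is expected when scripts are close in quality, so any real threshold would need to be set and justified by the awarding body.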

This process is not a “re‑mark” in the traditional sense. The aim is not to have a new person sit down and grade the script in isolation, because the ACJ grade arises from many comparisons, not one reading. Instead, the review asks whether anything in the process or the local pattern of judgements provides reasonable grounds to doubt the fairness of the result.

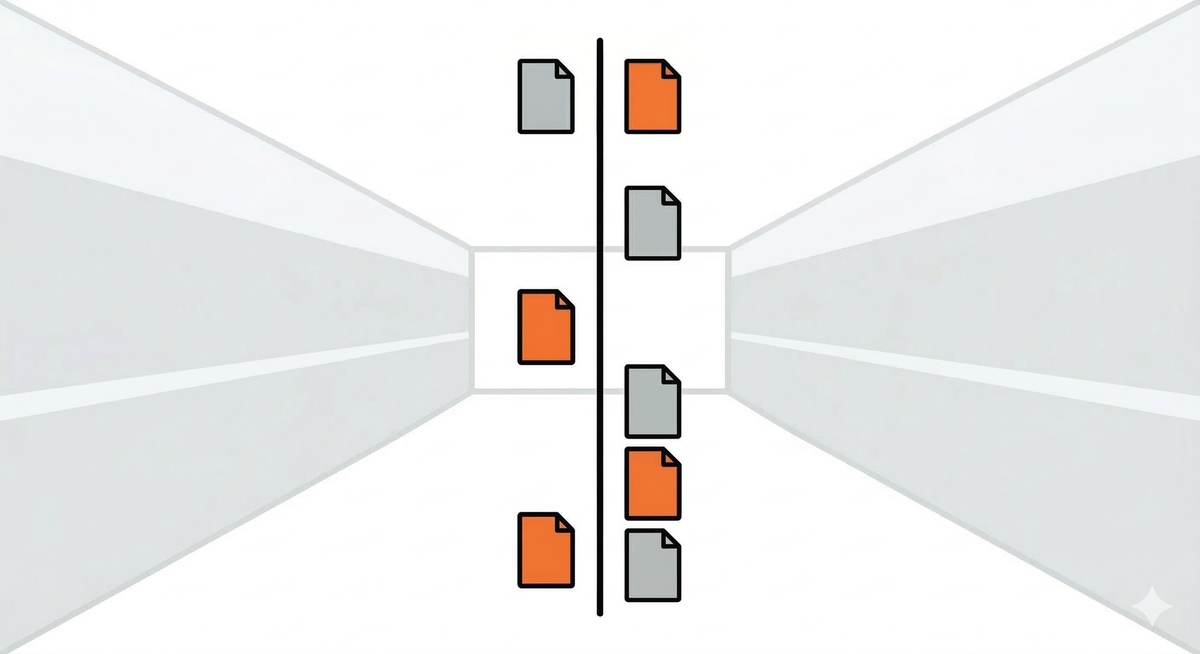

Possible outcomes of an appeal

A well‑designed ACJ appeals process will lead to familiar kinds of outcomes, even though the route is different.

In many cases, the outcome will be that the grade stands. The checks show that the candidate’s work was correctly included in the right session, received the intended number of judgements, and was mapped correctly to the published boundaries. The local review confirms that the script sits sensibly among its neighbours and boundary exemplars.

In very rare cases, an appeal will uncover an administrative or data error: a script assigned to the wrong cohort, a mis‑entered candidate ID, or an incorrect application of cut‑scores. These can be corrected, and the resulting grade change documented, just as they would be in a marks‑based system.

Even more rarely, the evidence may point to a process problem. For example, the candidate’s script might have received significantly fewer judgements than intended due to a configuration error, or the small cluster of judges who saw this script might include a misfitting judge whose decisions were not handled appropriately at the time. In these cases, the awarding body may decide to re‑run part of the session, or to schedule targeted re‑judging for a subset of scripts, before re‑scaling and re‑applying boundaries. Any resulting grade change is then handled through the normal governance channels.

The important point is that appeals remain exceptional and are handled within clear timelines and rules. What changes with RM Compare is not the existence of appeals, but the quality and granularity of the evidence available when they occur.

How RM Compare supports a defensible appeals framework

The features that make RM Compare attractive for initial grading are the same ones that make appeals more robust.

Because every judgement is logged with judge identity, timestamps and outcomes, awarding bodies have a full audit trail of how each candidate’s result came to be. Because the platform surfaces reliability statistics and misfit measures at session level, quality issues can be picked up early, reducing the likelihood that they surface only at appeal. And because boundary scripts and exemplars can be identified and exported, senior examiners can ground their decisions in real work rather than in abstract parameters.

From a regulator’s perspective, this means the core questions - did you follow your procedures, and is there evidence this candidate has been treated unfairly - can be answered with more than just a marked script and a total. There is a traceable chain from the candidate’s work, through a network of expert comparisons, to a scale position and a grade, and that chain can be examined when needed.

Reassuring centres and candidates

For centres and candidates, the key message is that moving to ACJ and RM Compare does not remove their right to challenge a result. An appeal may not look like a re‑mark in the old sense, but it still offers a structured way to ask “has something gone wrong here?”, and to get an answer grounded in evidence.

Awarding bodies adopting RM Compare should be explicit about this. They can publish clear descriptions of their ACJ‑based appeals routes, explain the kinds of checks that happen at each stage, and share anonymised examples of how appeals have been resolved. Doing so helps to build trust: not only in the new method, but in the idea that better data and richer audit trails can make the system fairer, not more opaque.

A candidate appeal, step by step

To make this less abstract, imagine a candidate, Amal, who has taken a high‑stakes extended project assessed using ACJ in RM Compare. When results are released, Amal’s grade is one band lower than her teachers expected, and the centre submits an appeal.

At Stage 1, the awarding body’s team checks the basics. They confirm that Amal’s project was correctly uploaded into the right RM Compare session and assigned to the correct cohort. They see that her work received the planned number of judgements for this assessment and that there were no system flags for missing data or technical errors. They then look at the ACJ scale and verify that her score falls just below the published cut‑score for the higher grade. On the face of it, nothing appears to have gone wrong.

Because the centre still has concerns, the appeal proceeds to Stage 2. Now the team drills into the underlying judgements. RM Compare shows every comparison involving Amal’s project: which other projects it was paired with, which way those decisions went, and where those other projects sit on the final scale. A clear picture emerges. Amal’s work was consistently judged weaker than a small cluster of projects above it, and consistently stronger than those just below. The system also shows the judges involved in those decisions. None of them has been flagged as a misfit in the session‑level analysis; their decisions, including those involving Amal’s work, are in line with their patterns elsewhere.

To sense‑check this, a senior examiner pulls out Amal’s project, the three projects immediately above her on the scale, the three below, and the agreed boundary exemplars for the two relevant grade bands. Reviewing this small set side by side, the examiner concludes that Amal’s work genuinely sits where the data say it does: stronger than some, weaker than others, and just on the lower side of the boundary. The appeal is not upheld, and the centre receives a clear explanation of the checks that were carried out and why the grade stands.

In a different case, the story might end differently. If the review had revealed that Amal’s project received unusually few judgements, or that it was almost exclusively seen by a judge later identified as an outlier, the awarding body could decide to re‑run a subset of judgements before re‑scaling and, if necessary, changing the grade. The point is not that every appeal leads to a change, but that every appeal can be answered with a detailed, reconstructable account of how the result was reached.

Why appeals are rarer – and less likely to overturn grades

One of the quieter advantages of ACJ with RM Compare is that it tends to reduce the need for appeals in the first place. Because every script is effectively “moderated” multiple times through many independent judgements, the risk that a single harsh or generous marker drives a result is much lower than in a traditional one‑marker system. Reliability is monitored at session level, misfitting judges can be identified and handled, and issues are more likely to be caught during awarding rather than after results go out.

When appeals do arise, they are also less likely to overturn grades, not because the bar is higher, but because the original decisions rest on a deeper evidence base. A grade built from dozens of consistent comparisons, anchored to agreed boundary scripts, will usually prove robust under scrutiny. The appeals process described above is there to catch the rare cases where something has gone wrong in configuration, data handling or judging, but in a well‑run RM Compare session those should be exceptions rather than the norm. In that sense, ACJ does not just survive an appeals regime; it helps create the conditions where appeals are genuinely about rare errors, not about patching the weaknesses of everyday marking.

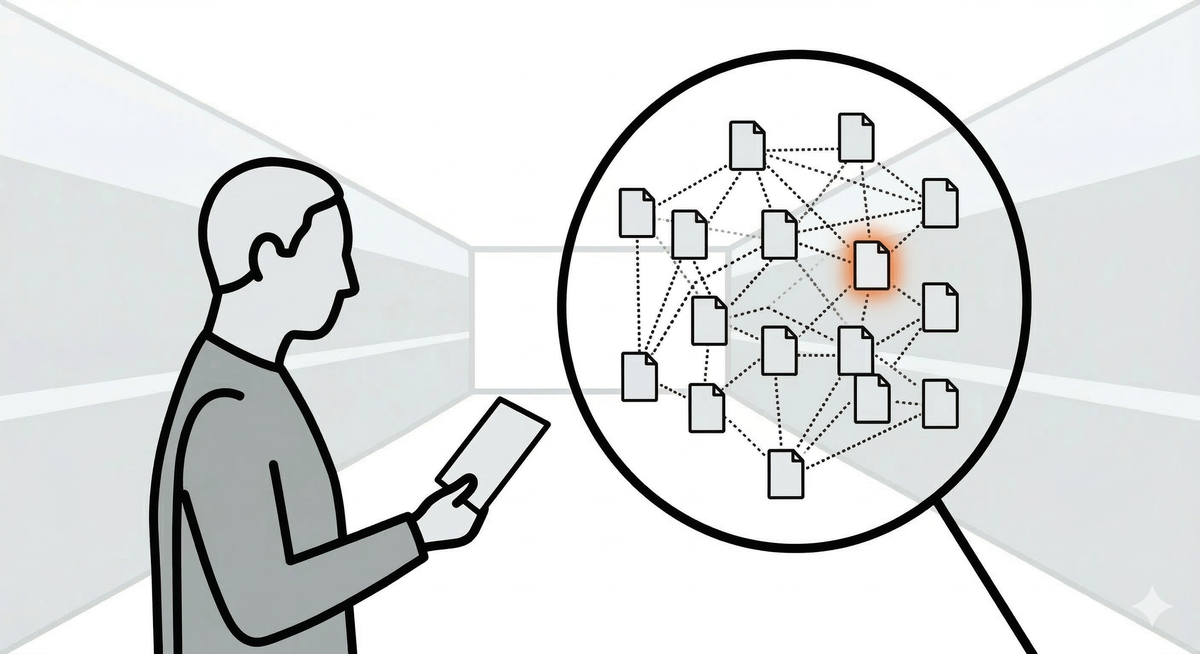

Talking about appeals with teachers and candidates

If ACJ and RM Compare feel mysterious, appeals will feel risky. The best defence is a simple, honest explanation of what happens if someone is unhappy with a result.

For teachers, that might mean a short briefing that says: “Your students’ grades come from many expert comparisons, not from one person marking alone. If you appeal, we don’t ‘re‑mark’ in the old way; we check whether their work went through the process correctly, whether it has enough judgements, whether the scale‑to‑grade mapping is correct, and how it sits among its neighbours and boundary examples. We can then explain what we found.” The aim is to replace the idea of a hidden algorithm with the picture of a traceable chain of professional judgements.

For candidates, the message needs to be simpler still. Centres can tell them: “Your work was seen multiple times and compared with other students’ work. Your grade reflects where it sits in that ordering. If you still think something is wrong, there is an appeals route where the exam board checks that your work was included properly and that the rules were applied fairly. They can’t promise a different grade, but they can promise a proper check of the process.” A one‑page infographic showing “from your script to your grade” – upload, multiple comparisons, scale, boundaries, possible appeal – can go a long way to making the system feel understandable rather than opaque.