- Opinion

Why AI Demands a New Architecture for Assessment (4-Part Series)

For more than 200 years, formal assessment has been organised around a simple industrial idea: break performance into parts, assign marks, aggregate them into grades, and trust the resulting number as a reliable signal of quality. That model emerged in the same historical moment as steam power, factories and other systems designed to make the world legible, standardised and controllable. It made sense in an age that increasingly believed complex human activity could be reduced to manageable parts and processed at scale.

But the conditions that gave rise to marking are beginning to change. Generative and agentic AI are exposing the limits of assessment systems built for a more predictable, more mechanical world. In response, much of the assessment sector is treating AI as a way to mark faster, moderate more cheaply, and preserve the familiar exam–mark–grade machinery. History suggests that this is what incumbents usually do in the early stages of disruption: they attach the new power source to the old vehicle and hope the broader architecture can remain intact.

This series argues that assessment is at the start of a second major disruption. The first, during the Industrial Revolution, pulled assessment away from disputation, ranking and holistic judgement and into the world of scripts, marks and grades. The second, driven by AI, is pushing in the opposite direction: away from assessment as industrial measurement and toward assessment as complex, ongoing judgement supported by technology rather than replaced by it.

The first disruption

Before numerical marking became normal, important assessments often depended on collective human judgement. At Cambridge, examiners reviewed candidates’ work and produced rank orders rather than assigning fine-grained numerical marks, a practice that treated quality as relational and comparative rather than atomised into points. This older tradition sat comfortably with forms of disputation and expert judgement in which the task was not to total up micro-decisions, but to decide who had demonstrated stronger performance in the round.

That changed in the late eighteenth and early nineteenth centuries. William Farish is widely associated with the introduction of quantitative grading at Cambridge around 1792, a move often described as a response to the practical need to process growing numbers of students more efficiently. In effect, assessment adopted the same logic that was reshaping industry: standardise the task, inspect the output, and generate a result that can be stored, compared and scaled.

Seen in that light, marking was not a timeless truth about how learning should be evaluated. It was a technology of an industrial age. Its strengths were the strengths industrial society prized most highly: efficiency, comparability, administrative simplicity and the appearance of objectivity. It helped schools, universities and awarding bodies cope with larger populations and more formal systems of selection.

The faster horse problem

The current AI wave is producing a familiar response. Regulators and providers are largely framing AI as something to be inserted into existing marking processes, especially for support tasks such as marker training, quality assurance and lower-stakes scoring. Industry commentary likewise emphasises efficiency, scalability and consistency, suggesting that the dominant ambition is not to move beyond marking but to make the marking machine run better.

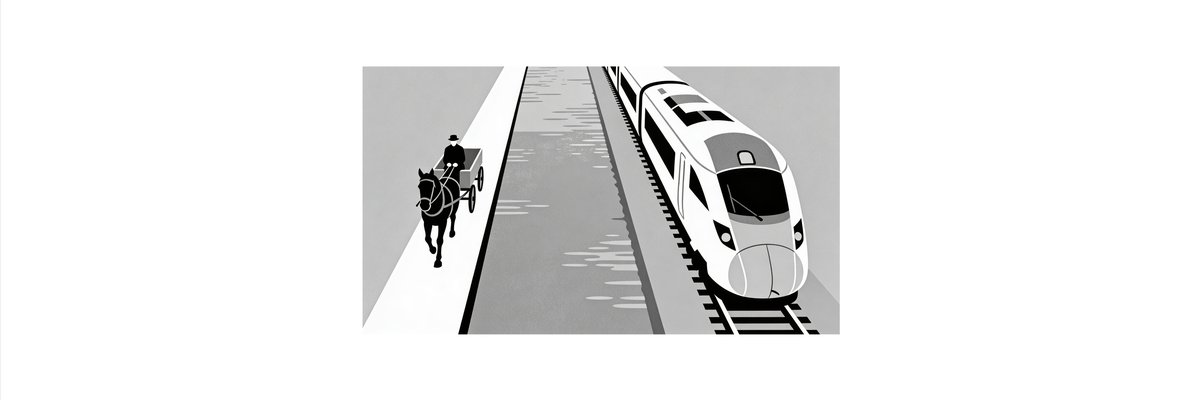

That is why the image of the “faster horse” matters. When a new technology arrives, incumbents rarely begin by redesigning the whole system around it. In transport, steam power was first used within older arrangements of canals and haulage before railways showed that steam needed a different infrastructure altogether. In the automotive world, autonomous driving is still often framed as a feature of the existing car model rather than a catalyst for rethinking mobility as a service. In assessment, AI is currently being asked to do the old job faster: generate items, support marking, detect anomalies and preserve the credibility of marks and grades.

This response is understandable. Existing systems have regulation, reputation, psychometric machinery and operational habits built around marks. But it also risks misreading the moment. If the environment has changed from a complicated world to a genuinely complex one, then making the old system more efficient may delay the transition while doing little to solve the deeper mismatch.

From complicated to complex

A complicated system can be decomposed, analysed and reassembled. A complex system is adaptive, path-dependent and full of interactions that change the conditions under which judgement is made. Traditional marking works best when it can assume stable constructs, bounded tasks, and a world in which performance can be sampled once and scored against a fixed scheme. That assumption looks increasingly fragile in an age where learners can work with AI, where capability is distributed across tools and contexts, and where valuable performance often unfolds over time rather than in a single sitting.

This is why AI may prove to be more revolutionary than evolutionary for assessment. It is not only making cheating or authorship harder to police. It is also forcing a deeper question: what counts as evidence of judgement, capability and growth when intelligent tools are part of the normal environment? If the answer is still “a script marked against a scheme,” assessment may cling to industrial habits long after the world those habits were built for has passed.

Why human judgement returns

If industrial assessment replaced holistic judgement with marking, the AI era may require the reverse move. Comparative judgement and related approaches rest on a simple but powerful insight: experts are often more reliable at deciding which of two performances is better than at assigning a precise mark to one performance in isolation. Modern adaptive comparative judgement takes that older human capacity and scales it through technology: a statistical model combines many pairwise decisions into a robust rank order and a scaled outcome, and the system uses the judgements made so far to choose which pairs to compare next.
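To make the step from many pairwise decisions to a scale more concrete, here is a minimal sketch. It assumes a Bradley-Terry model, in which the chance that script i is preferred to script j depends only on the difference between their underlying quality scores, fitted by plain gradient ascent with a small L2 penalty so that a script that always wins still gets a finite score. Real comparative-judgement tools may use a different model or estimator. The function names, the penalty value and the scripts A to D are hypothetical, and the adaptive part (choosing which pairs to show judges next) is left out.

```python
import math


def fit_bradley_terry(judgements, steps=2000, lr=0.05, l2=0.1):
    """Turn pairwise judgements into a scale score per script.

    judgements: list of (winner, loser) pairs, one per judge decision.
    Assumes a Bradley-Terry model, P(i beats j) = 1 / (1 + exp(theta_j - theta_i)),
    fitted by gradient ascent on the log-likelihood with an L2 penalty (an
    illustrative choice) so that unbeaten or winless scripts stay finite.
    """
    scripts = sorted({s for pair in judgements for s in pair})
    theta = {s: 0.0 for s in scripts}
    for _ in range(steps):
        # Gradient of the penalised log-likelihood, starting with the L2 term.
        grad = {s: -l2 * theta[s] for s in scripts}
        for winner, loser in judgements:
            # Model's current probability of the outcome the judge actually chose.
            p = 1.0 / (1.0 + math.exp(theta[loser] - theta[winner]))
            # Push the winner up and the loser down, more so when the outcome was surprising.
            grad[winner] += 1.0 - p
            grad[loser] -= 1.0 - p
        for s in scripts:
            theta[s] += lr * grad[s]
    return theta


def rank_order(theta):
    """Scripts sorted from strongest to weakest estimated quality."""
    return sorted(theta, key=theta.get, reverse=True)


# Hypothetical judgements: each tuple records which of two scripts a judge preferred.
judgements = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("B", "D"), ("C", "D")]
theta = fit_bradley_terry(judgements)
print(rank_order(theta))  # → ['A', 'B', 'C', 'D']
```

No judge assigns a mark at any point; each script's score emerges from the pattern of decisions it was involved in. An adaptive system would add a pairing step on top of this, for example favouring pairs whose current scores are close, so that each new judgement carries more information.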

This matters because it points beyond the industrial model rather than merely refining it. Human judgement, properly structured, can deal with ambiguity, quality in the round, and performances that do not fit neatly into pre-specified boxes. In a world shaped by AI, that kind of judgement may become more, not less, important. The challenge is not to remove humans from assessment, but to build systems in which human and AI capabilities can work together without collapsing everything back into the old language of marks.

What this series will explore

The posts that follow will develop this argument in four steps.

- Post 1: how the Industrial Revolution created the conditions for numerical marking and why assessment became one more part of the clockwork world.

- Post 2: why today’s assessment sector is trying to build “faster horses” with AI, and what history suggests happens to systems that confuse disruption with mere optimisation.

- Post 3: how comparative judgement and disputation recover older traditions of expert human judgement and make them newly relevant in the age of AI.

- Post 4: what a post-industrial architecture for assessment might look like if marks become one projection of richer, ongoing, multi-source judgement rather than the centre of the system.

The core claim is simple. Assessment was not always built around marks, and it does not have to remain that way. The industrial age made marking look inevitable. The AI age is beginning to show that it was historical, contingent and possibly temporary.