- Opinion

From Disputation to Marks: How the Industrial Revolution Rewired Assessment (Part 1 / 4)

For more than 200 years, formal assessment has been organised around a deceptively simple idea: break performance into parts, assign marks, add them up, and trust the resulting number. Written exams and numerical grading feel so natural that it is hard to imagine assessment working any other way.

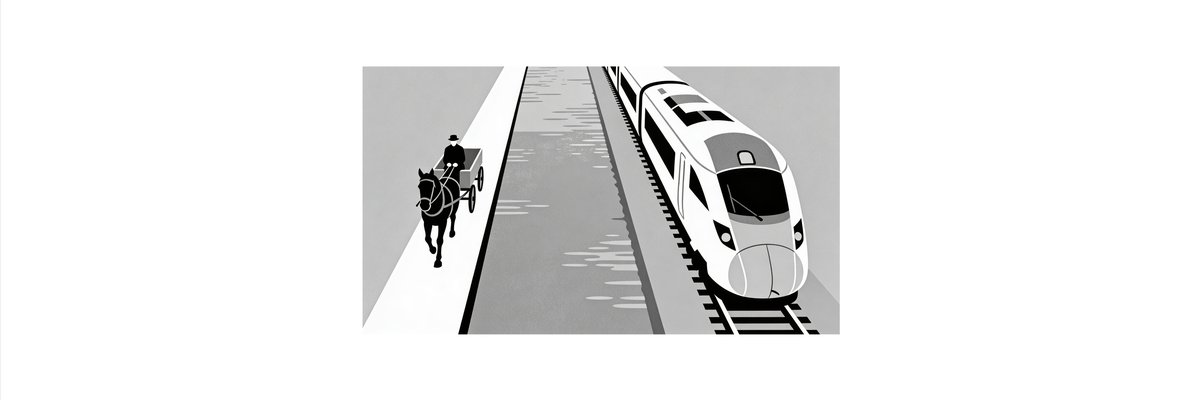

But it hasn’t always been like this. The system of scripts, marks and grades that dominates today is a historical invention. It emerged alongside the Industrial Revolution and carries the same worldview: the belief that messy reality can be decomposed into standard parts, measured precisely, and assembled into a reliable whole.

If that is true, it matters, because the world that assessment now has to describe – a world of complex skills, networked learning and powerful AI – looks less like a Swiss clock and more like a living ecosystem. A system built for the age of steam may not be the right one for the age of AI.

Before marks: assessment as judgement

Go back to late eighteenth‑century Cambridge and assessment looks strikingly different from today. Examiners did not sit with detailed mark schemes, counting points for each element of a model answer. Instead, they read candidates’ work, compared performances, and produced rank orders.

The question was not “Is this a 63 or a 67?”. It was “Who has performed better overall?”. Quality was treated as relational and holistic, not as a sum of atomised scores. Judgement lived in conversation and comparison, not in a spreadsheet.

This approach resonated with older academic traditions of disputation. In disputation, candidates defend ideas in dialogue, and expertise is expressed in how well they argue, respond, and connect across a body of knowledge. Assessment here is about making sense of complex performances in context. It depends on shared standards, professional judgement and the willingness to argue about what counts as better.

That kind of assessment does not scale easily. It demands time, attention and direct engagement with student work. It is also difficult to translate directly into simple, comparable records that can be used for large‑scale selection and administration. Which is where the Industrial Revolution comes in.

The industrial turn: when marks arrived

The late eighteenth and early nineteenth centuries saw huge changes in how society organised work and value. Steam engines, mechanised textiles, ironworks and railways created production systems with new priorities: throughput, standardisation, repeatability, control.

Industrial processes needed parts that were interchangeable and outputs that could be inspected quickly: up to standard, or not. That logic did not stay inside factories. It became a way of thinking about all kinds of complex activity.

Assessment was not immune. William Farish, a Cambridge scholar active around 1792, is often credited with formalising numerical grading for student work. Instead of relying only on qualitative impressions and rank orders, he introduced the idea that each element of a student’s performance could earn a set number of marks, which could then be summed to give a total.

This was a genuine innovation. It promised several things at once:

- Examiners could mark more scripts more quickly, because they were checking parts against a scheme rather than re‑evaluating whole performances each time.

- Results could be stored and compared across candidates and years, supporting more systematic selection and progression.

- The process looked more transparent and defensible: marks appeared to be grounded in clear criteria rather than in the personal authority of individual examiners.

In short, assessment adopted the factory mindset. Student work became, in effect, a manufactured product. Questions were components; marks were inspection results; grades were the label attached at the end of the line.
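For readers who think in code, the additive logic can be sketched in a few lines. This is only an illustration: the question names, maximum marks and grade boundaries below are invented for the example and are not drawn from any historical or current mark scheme.

```python
# Hypothetical mark scheme: maximum marks available per question (invented values).
MARK_SCHEME = {"q1": 10, "q2": 15, "q3": 25}

# Hypothetical grade boundaries as (minimum total, grade), highest first.
GRADE_BOUNDARIES = [(35, "A"), (25, "B"), (15, "C")]


def total_marks(awarded: dict) -> int:
    """Add up the marks for each question, capped at the scheme maximum."""
    return sum(
        min(awarded.get(question, 0), maximum)
        for question, maximum in MARK_SCHEME.items()
    )


def grade(total: int) -> str:
    """Attach the label at the end of the line: the first boundary the total meets."""
    for minimum, label in GRADE_BOUNDARIES:
        if total >= minimum:
            return label
    return "U"  # below every boundary


# Two very different scripts that arrive at the same number.
script_a = {"q1": 10, "q2": 15, "q3": 0}
script_b = {"q1": 5, "q2": 5, "q3": 15}
print(total_marks(script_a), grade(total_marks(script_a)))
print(total_marks(script_b), grade(total_marks(script_b)))
```

In this sketch both scripts total 25 and receive the same grade, although one answers two questions perfectly and skips the third while the other attempts everything unevenly. Once the parts are summed, the total no longer records which kind of performance produced it.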

It was an elegant solution to the problems of a growing, industrialising education system. It made mass schooling and mass examinations administratively manageable. It aligned perfectly with a world that was increasingly confident that complex phenomena could be tamed if only they were broken into the right pieces and measured in the right way.

What was gained – and what was lost

The industrial turn brought real benefits.

- It enabled scalability. Large cohorts could be examined and graded in ways that felt broadly consistent.

- It supported centralised systems. Universities, employers and later the state could use grades as a common currency for selection and allocation.

- It seemed to offer objectivity. A mark scheme looked more neutral and less personal than an examiner’s holistic judgement.

Those gains explain why marking became the dominant technology of assessment and why it has proved so resilient.

But something important was lost in the process.

When performance is decomposed into parts, it becomes harder to see and reward qualities that only appear in the whole: coherence, voice, originality, judgement. When every decision must be mapped to a mark scheme, disagreement between experts – which is often where the most interesting learning happens – is treated mainly as error to be eliminated, not as a source of insight.

Perhaps most significantly, the industrial model encourages a particular illusion: that if we can specify every criterion and allocate every mark, we have captured the essence of quality. The map starts to look like the territory.

That illusion is easier to maintain in a relatively stable, slow‑changing world. It becomes harder when the environment shifts.

Why this history matters now

Today, a new wave of disruption is under way. Generative and agentic AI systems can write essays, solve problems, simulate conversations and participate in complex tasks. Learners can work with AI as collaborators, coaches and tools. Valuable capabilities often show up not in one‑off performances but in how people learn, adapt and make use of these systems over time.

This is not the kind of world the industrial marking machine was designed for.

The early reaction has been predictable. Much of the assessment sector is trying to contain AI by using it to shore up the existing regime: as a way to mark more efficiently, generate more items, detect anomalies or support human markers. AI is being treated as a new power source for the old engine.

The next post calls this the “faster horse” problem: the tendency of incumbents to use new technologies to do the old job a bit better, instead of asking whether the job itself has changed.

The history of the first disruption – from disputation and ranking to exam marking – gives two important lessons for the second.

First, it shows that assessment can change profoundly when the surrounding world changes. Marking is not an eternal necessity. It was an adaptation to the needs and beliefs of an industrial age.

Second, it suggests that when the underlying logic of production and knowledge shifts, systems that cling too tightly to their old technologies risk being left behind. The Industrial Revolution did not mean better handlooms; it meant factories. The AI revolution may not mean better marking; it may require a different architecture of judgement altogether.

Where this series goes next

This post has told a deliberately simple story: there was a time before marks; the Industrial Revolution made marking central; and that move shaped assessment in ways that are easy to forget.

The rest of the series will build from here:

- The next post will look at how today’s assessment systems are using AI to build “faster horses”, and why that strategy has limits.

- A third post will explore comparative judgement and disputation as ways of rediscovering richer human judgement in an AI age.

- A final post will sketch what a post‑industrial assessment architecture might look like if marks and grades become just one projection of a richer, ongoing conversation about quality.

The goal is not to romanticise the past or to abandon structure. It is to recognise that the tools inherited from the Industrial Revolution may no longer be enough – and that new technologies, used differently, can help assessment catch up with the complexity of the world it is supposed to describe.