Blog

Posts for category: AI & ML

-

Why Evaluative Judgement is essential in the age of AI: Looking forward with RM Compare

As artificial intelligence (AI) and large language models (LLMs) revolutionise learning and work, evaluative judgement—the capability to decide what “good” really means—has never been more important. RM Compare is at the heart of this movement, guiding educators, students, assessors, and designers to thrive in an AI-rich future.

-

Comparative Judgement: Elevating Human Input in the Age of AI

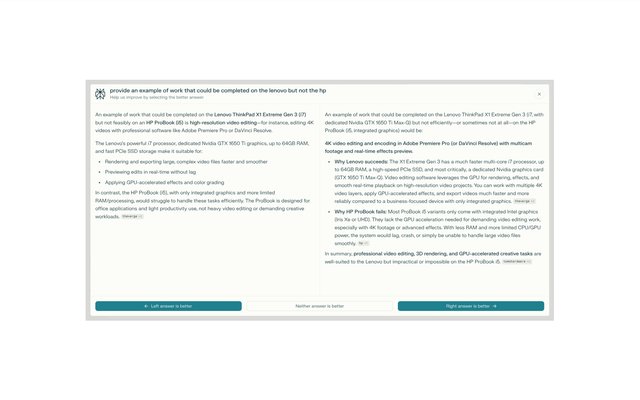

If you are a user of an LLM, you may have seen something similar to this image. Look familiar, right? This image is taken from Perplexity, but the problem they are trying to solve is a universal one, and it's not going away any time soon!

-

Beyond Formula: The New Frontier for Assessment in an AI World

As AI-powered marking becomes more common in education, we must confront a critical risk: the “washback” effect, where the method of assessment shapes how and what students learn. Automated essay scoring powered by AI often rewards essays that adhere closely to expected templates—clear structure, formulaic vocabulary, and standard organization—regardless of whether content is genuinely insightful or original.

-

Finding the Right Fit: How RM Compare is Transforming Candidate Selection for Work, University, and Schools in the Age of AI

Across industries and institutions, those responsible for recruitment and admissions are wrestling with a perfect storm: soaring application numbers, the rapid rise of AI-generated content, and the ever-present need for fairness and authenticity. The result? Recruiters and admissions teams are overwhelmed, and the risk of missing out on genuine talent has never been higher.

-

What the Recent Apple Study Taught Us About AI, Reasoning, and Assessment

In June 2025, Apple published a landmark study that has sent shockwaves through the AI and assessment communities. The research, titled The Illusion of Thinking, rigorously tested the reasoning abilities of the most advanced AI models—so-called large reasoning models (LRMs) from OpenAI, Google, Anthropic, and others—using a series of classic logic puzzles designed to scale in complexity. The findings have profound implications for how we understand AI’s capabilities, especially in the context of educational assessment.

-

RM Compare and AI

We get asked a lot of questions about RM Compare and AI. Here are some of the most common ones, together with our responses.

-

Response to the DfE Policy Paper - Generative artificial intelligence (AI) in education (22 Jan 2025)

The UK Department for Education's guidance on generative AI in education, released today, emphasizes the importance of human expertise and judgment in the educational process.

-

Thinking Like a Connoisseur: RM Compare's Role in the Age of AI

In an era where artificial intelligence is rapidly transforming various aspects of our lives, the art of connoisseurship remains a uniquely human skill that is more valuable than ever.

-

Human Expertise Remains Crucial in Educational Assessment: Insights from Recent AI Research

A recent trial conducted by the Australian Securities and Investments Commission (ASIC) found that AI performed significantly worse than humans in summarizing complex documents. At RM Compare, we see these findings not as a setback, but as an opportunity to reinforce our commitment to human-centric assessment solutions.