Blog

Posts for category: Product

-

When you know, you know

We are living through an anxious moment in education. AI is reshaping what matters, curriculum is shifting under our feet, and many of the old certainties no longer feel certain at all. In that kind of climate, assessment can easily become another source of pressure: another place where people are asked to act with confidence before they have had the chance to build it. It does not have to be like that.

-

➿RM Compare|📳Live NOW (ALPHA) - New features

We continue to push forward with our work in ➿RM Compare|📳Live NOW, driven largely by user feedback. Want to know more and take part? Get in touch. We have introduced a new Catalogue feature. In addition, there is new Guild Knowledge functionality.

-

Curriculum change, AI and the race for 'Guild Knowledge'

Across most established curricula, something important already exists but is rarely named. Over time, teachers, examiners, parents and students have built a shared intuition for what “good” looks like: what a strong essay feels like, what a secure piece of maths work looks like, what “ready for the next stage” means in a subject. That shared intuition is what we have been calling Guild Knowledge.

-

Why 'Guild Knowledge' is your organisation's most valuable, and most overlooked, asset

In the previous posts, we explored what Guild Knowledge is (the tacit, experience‑based ability to recognise quality) and showed what the research says about how it can be developed through Learning by Evaluating. This post makes the organisational case: what happens when Guild Knowledge is absent, why conventional responses make it worse, and how to build it deliberately and at scale.

-

The research behind Learning by Evaluating: Why RM Compare | ⏱️NOW works

In the last post, we introduced Assessment as Learning and the idea of Guild Knowledge - the tacit, experience‑based ability to recognise quality that experts build over time. RM Compare | ⏱️NOW gives people a short, structured way to test and develop that ability by estimating quality, making comparisons, and seeing how accurate their judgement is.

-

RM Compare | ⏱️NOW and Assessment as Learning: Building 'Guild Knowledge'

⏱️RM Compare | NOW isn't just a convenient demo or a quick assessment tool. It's a practical example of Assessment as Learning - a pedagogical approach that helps people develop what's called Guild Knowledge.

-

Existing customer announcement

RM Assessment, which provides RM Compare, is moving to a new legal entity from 1 June 2026.

-

Is your software high quality, how do you know – and does it matter?

When important decisions, results or reputations depend on a piece of software, “quality” stops being a nice‑to‑have. It becomes the question of whether that system will be there when people need it, and whether it can adapt as your needs change.

-

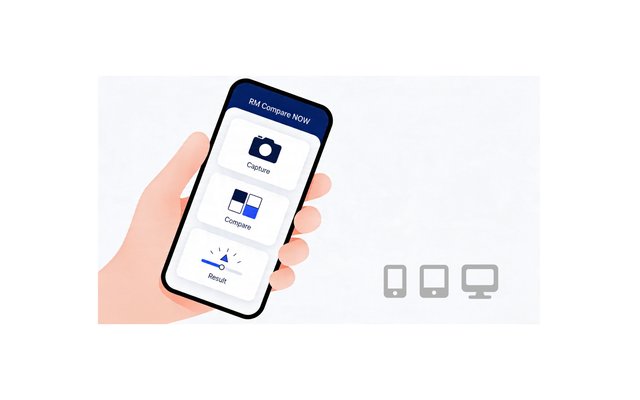

⏱️RM Compare | NOW is in ALPHA

We’re pleased to announce that ⏱️RM Compare | NOW is now in ALPHA. It is a lightweight RM Compare experience designed for quick judgements, allowing users to capture or upload an item, compare it to a ready-made standard, and review a score in just a few steps.