Blog

Posts for category: Research

-

From Steady State to Rulers: how RM Compare is building the future of shared standards

Assessment systems often talk about standards, but too often those standards remain abstract. Teachers, examiners and assessors are expected to align to a wider benchmark, yet in day-to-day practice they usually see only the work directly in front of them: their own class, their own cohort, their own centre. That gap matters.

-

When AI Beats Economists – And Why That’s Good News For Assessment

Not so long ago, the idea that an AI system could out‑analyse a room full of economists would have sounded like science fiction. Yet that’s exactly what a recent Federal Reserve working paper set out to test.

-

Time for Tea? Can AI spot a nice cuppa?

Just like everyone else in the UK, we are getting very excited about National Tea Day, which takes place on the 21st April. The day is a great opportunity to share tea-brewing preferences, and the strength of the perfect 'cuppa' is always hotly debated. So we thought it would be interesting to get the view of AI.

-

Using Comparative Judgement to keep exam grades fair when tests change (OFQUAL Research report 2025)

Every year, exams change. New papers are written, formats evolve, and sometimes whole qualifications are refreshed. Yet everyone, from students and parents to teachers and universities, still expects one simple promise to hold: a grade this year should mean the same as a grade last year. So what do you do when the test itself changes?

-

The OECD Just Mapped the Certification Problem. Here's the Solution.

A response to the OECD's The Theory and Practice of Upper Secondary Certification (2026). The OECD report has mapped the territory of the problem with exceptional care. The standardisation challenge is real, it is persistent, and it has defeated every country that has tried to solve it from within the existing paradigm. The solution is not a better mark scheme. It is a better question.

-

What the latest ACJ research means for real‑world assessment

A new study has given Adaptive Comparative Judgement (ACJ) one of its toughest tests yet: using it to assess long, complex law essays in a real university context. The results are encouraging for anyone interested in more reliable, fair and meaningful assessment – and they also highlight some very practical design questions we, as a community, need to solve together.

-

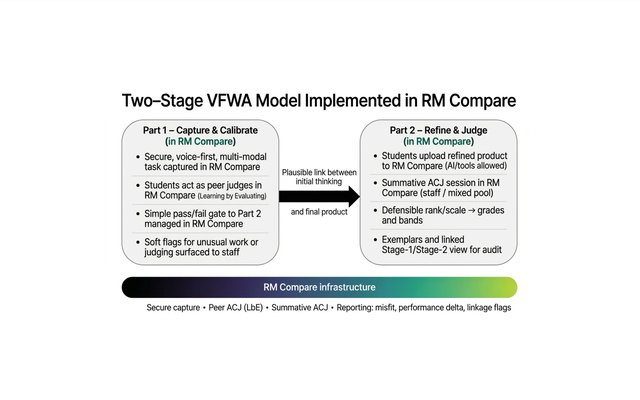

From Product to Process: How VFWA and RM Compare might reclaim academic integrity in the age of AI

Generative AI has broken one of higher education’s quiet assumptions: that a polished essay is a reliable proxy for student thinking. When tools can generate fluent academic prose on demand, we can no longer treat the final product as straightforward evidence of cognitive effort or authorship. The question for universities is no longer, “How can we prove this text wasn’t written by AI?” but “How can we design assessments where AI cannot replace the student’s contribution, only support it?”

-

When the Candidates Pass – But the Exam Doesn’t. How to rescue qualifications in the Age of AI

A City of London awarding organisation recently found itself in a strange position. Year after year, candidates were passing a respected Level 6/7 qualification. The statistics looked healthy, the quality assurance paperwork was in order and the exam board could point to detailed rubrics and grade descriptors. Yet employers were telling a different story.

-

Escaping the Text Trap: Why the Future of Assessment is Spatial

If you look at the current headlines in education, you’d be forgiven for thinking human intelligence is made entirely of words. We are locked in an arms race over "Text Intelligence." We worry about Large Language Models (LLMs) writing essays for students, and we counter by building AI tools to grade those essays. We are obsessed with the Linguistic Bottleneck - the idea that the only way to prove you understand the world is to write a description of it. But what if we are assessing the wrong intelligence entirely? What if the future of assessment isn't about better ways to read text, but better ways to see action?