- Opinion

Why Is the Assessment System Trying to Build Faster Horses – and What Can Be Done About It? (Part 2 / 4)

When a powerful new technology arrives, the first instinct is nearly always the same: use it to do the old job a bit better.

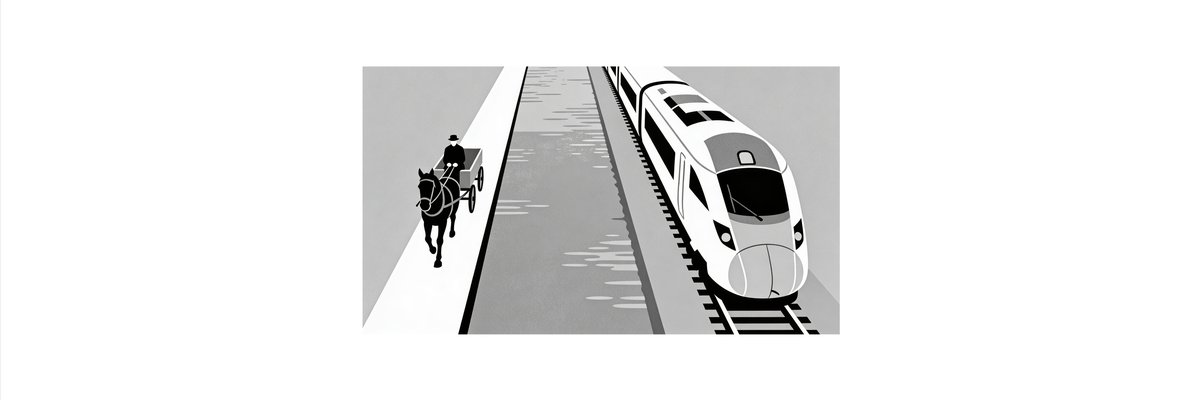

Early steam engines were bolted onto canal boats to do the work of horses. Today, autonomous driving is marketed as a convenience feature in conventional cars rather than a reason to rethink how mobility works. And in assessment, AI is being pulled into precisely the same pattern: a new engine strapped onto an old chassis.

The result is an assessment sector trying very hard to build faster horses.

Faster horses in an age of steam

History is full of examples of incumbents treating disruption as a speed or efficiency problem.

For a time, this works. Steam tugs really did pull boats faster than horses along canals. Early mainline railways still used horse‑drawn wagons in yards because that was how shunting had always been done. The new power source is made to behave like the old one because the surrounding infrastructure – canals, yards, regulations, habits – has not yet changed.

But over time, something else happens. The technology shows what it really wants.

Steam wanted rails, gradients, new routes, new timetables. Once railways and steamships exploited that logic fully, canals and horse traction didn’t disappear, but they moved to the margins. The centre of gravity shifted to an architecture that matched the new capabilities and the new environment.

The question for assessment is simple: is AI being treated more like steam tugs on canals, or more like the start of the railways?

How assessment is building faster horses

Look at how most large assessment providers and regulators are currently talking about AI:

- As a way to speed up marking: pre‑marking scripts, suggesting marks for human confirmation, or taking the strain on low‑stakes tasks so humans can focus on high‑stakes ones.

- As a way to scale existing tests: generating more items, providing instant feedback, extending familiar exam formats into online environments.

- As a way to police the boundaries: detecting plagiarism, spotting anomalies in scripts, enforcing rules about “unauthorised assistance”.

The underlying assumptions stay the same:

- An exam is still a timed event where individuals produce scripts.

- Marks are still the primary unit of evidence.

- Grades are still the main way outcomes are communicated and decisions are made.

AI here is a better horse: stronger, faster, less tired, sometimes more consistent. The canal is the existing exam‑and‑mark infrastructure. The towpath is the regulatory and accountability framework that channels everything into grades.

From the inside, this feels prudent and responsible. There are real concerns about fairness, bias, transparency and trust. Bolting AI onto existing processes looks safer than rethinking the processes themselves.

The problem is not that any of this is wrong in the short term. The problem is that it might be all that happens.

Why faster horses eventually lose

The pattern from other domains is clear. Faster horses lose when three things line up:

- The environment changes.

The context in which the system operates becomes more complex, volatile or interconnected than the old architecture was designed to handle. For assessment, that means learners working with AI tools as a matter of course, knowledge changing quickly, and valuable capabilities showing up in complex, collaborative, real‑world tasks rather than in isolated scripts.

- The old architecture becomes visibly inadequate.

The system starts to fail on its own terms. In assessment, this looks like grades that no longer differentiate meaningfully between candidates, tasks that are easily gamed or solved by generic AI, and growing doubt that traditional exams tell us much about what people can actually do.

- Parallel architectures quietly outcompete it.

New ways of evidencing capability emerge alongside the old ones: rich portfolios, project‑based selection, ongoing professional assessments, networked reputations. At first they are niche. Over time they become more predictive, more trusted in certain contexts, and more aligned with the skills that matter.

When those three forces reinforce each other, the system that invested only in faster horses finds that it owns the wrong infrastructure. The problem is no longer speed. It is shape.

Assessment is not at that point yet. But the early signs are visible: employers paying more attention to real work and portfolios, universities experimenting with authentic tasks and longer projects, skills systems wrestling with how to assess complex, AI‑mediated performance.

The deeper issue: complicated vs complex

Underneath the faster horse problem sits a deeper mismatch between two ways of seeing the world.

- A complicated system can be decomposed into parts. If you understand the parts and how they fit, you can predict and control the whole. This is where marking schemes, item banks and psychometric models shine.

- A complex system is adaptive, path‑dependent and full of feedback loops. New behaviours emerge from interactions. You cannot understand or steer it just by inspecting isolated components.

Industrial‑age assessment is a technology for a complicated world. It assumes stable constructs, bounded domains, and performances that can be sampled in a few hours under controlled conditions. It relies on the idea that if you define the parts carefully enough and count them reliably enough, the resulting grade is a good summary of capability.

AI pushes in the opposite direction. It blurs the boundaries between human and tool, makes knowledge more fluid, and embeds people in ever‑richer networks of collaboration and assistance. It makes performance more context‑dependent, not less. It shines in the messy middle of real tasks rather than in artificial isolation.

A system designed to measure complicated things is now being asked to make sense of complex ones. Making that system faster does not solve the mismatch. In some ways, it makes it worse, because it increases confidence in numbers that are less and less connected to what actually matters.

If not faster horses, what?

If building faster horses is not enough, what is the alternative?

The alternative is not to abandon structure or evidence, and it is not to reject AI. It is to change the job that assessment is being asked to do and the architecture used to do it.

That means, among other things:

- Shifting from one‑off events to ongoing trajectories: seeing learning and capability as patterns over time, across tasks and contexts.

- Moving from isolated scripts to networks of evidence: portfolios of work, performances in authentic settings, collaborative outputs, and the way people work with AI tools.

- Treating marks and grades as projections, not the core reality: useful in some contexts, but not the only or even the primary way to represent what someone can do.

- Making space for structured human judgement to sit at the centre again, supported (but not replaced) by AI: experts, peers and even AI agents comparing, disputing and refining judgements rather than simply ticking boxes.

This is where comparative judgement and modern platforms for orchestrating it matter. They offer a way to treat quality as relational and emergent again, while still producing defensible, scalable results. They are closer to railways than to steam tugs: a new architecture that assumes the world is complex and that judgement is something to be organised, not eliminated.
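For readers who want to see what "quality as relational" can mean in practice, here is a minimal sketch, not a description of any particular platform. It assumes judges are shown two pieces of work at a time and simply say which is better, and it fits a Bradley-Terry style model to those decisions to produce a scale. The function name, the `prior` smoothing term and the example data are all illustrative assumptions.

```python
from collections import defaultdict


def fit_bradley_terry(judgements, iterations=200, prior=0.1):
    """Estimate a relative quality score per item from pairwise judgements.

    judgements: iterable of (winner, loser) pairs.
    prior: small pseudo-count added to every item's wins (an assumption made
           here so that an item with no wins still gets a non-zero score).
    Returns a dict mapping item -> score, normalised to sum to 1.
    """
    wins = defaultdict(int)
    pair_counts = defaultdict(int)
    items = set()
    for winner, loser in judgements:
        if winner == loser:
            continue  # a script cannot be compared with itself
        items.update((winner, loser))
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1

    items = sorted(items)
    scores = {item: 1.0 / len(items) for item in items}

    # Iterative update: each score is the item's (smoothed) win count divided
    # by the expected number of comparisons it "should" win at current scores.
    for _ in range(iterations):
        updated = {}
        for i in items:
            denom = 0.0
            for j in items:
                if i == j:
                    continue
                n_ij = pair_counts.get(frozenset((i, j)), 0)
                if n_ij:
                    denom += n_ij / (scores[i] + scores[j])
            updated[i] = (wins[i] + prior) / denom if denom else scores[i]
        total = sum(updated.values())
        scores = {item: value / total for item, value in updated.items()}
    return scores


# Hypothetical judgements: each tuple is (preferred script, other script).
example_judgements = (
    [("A", "B")] * 3 + [("B", "A")] + [("B", "C")] * 3 + [("A", "C")] * 2
)
example_scores = fit_bradley_terry(example_judgements)
for script in sorted(example_scores, key=example_scores.get, reverse=True):
    print(script, round(example_scores[script], 3))
```

No mark scheme appears anywhere in this sketch. The scale emerges from many relative decisions, and a disagreement between judges (here, one judge preferring B to A) is absorbed as evidence rather than treated as an error.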

What can be done now?

If the assessment system is currently trying to build faster horses, there are still constructive steps to take. A start would be to be explicit about “AI as faster horse”: naming the pattern helps stakeholders see that using AI only to speed up marking is a strategic choice, not an inevitability.

The first disruption pulled assessment into an industrial, complicated world and gave us marking as the central technology. The second disruption is opening up a complex world in which that technology may no longer be enough.

Faster horses will get the system through the next few years. New tracks will decide what comes after.