Gradually then suddenly (Part 4 / 4)

If the first post in this series traced the industrial birth of marking, the second described the temptation to use AI as a faster horse, and the third argued for rediscovering human judgement, this final post asks the practical question: what would assessment look like if it were designed for the world now emerging rather than the one that produced marks and grades?

That question matters because the existing system still behaves as if the central task of assessment is to inspect a script, allocate marks and issue a grade. For some purposes that model will survive for a while yet, because institutions, regulators and public expectations remain heavily invested in it. But once AI becomes normal infrastructure for learning and work, the limits of that model become harder to ignore. The issue is not simply that AI makes cheating easier. It is that AI reveals how narrow the dominant conception of assessment has been. When capability is distributed across people, tools, contexts and time, a one-off marked performance stops looking like the natural centre of the system.

A post-industrial architecture for assessment begins by accepting that reality instead of fighting it.

From events to trajectories

Industrial assessment is built around events. A candidate sits an exam, submits a script or completes a controlled task, and the result is captured in a mark or grade that travels forward as a summary of what happened. The emerging world points in a different direction. Capability is increasingly revealed over time: in drafts, revisions, responses to feedback, collaborative work, project choices and the ways people use tools intelligently and responsibly.

In such a setting, the most meaningful question is often not “What did this person score on that day?” but “What kind of trajectory is visible here?” A post-industrial system therefore pays much more attention to sequences. It looks for patterns of growth, consistency, originality, judgement and transfer across tasks and contexts. It assumes that the best evidence of capability is often cumulative rather than episodic. This is already visible in discussions of AI-infused learning environments, where continuous assessment and portfolios are increasingly seen as better aligned with real learning than single end-point measures. UNESCO’s work on AI and the futures of learning points in the same direction, emphasising that education systems must think beyond narrow testing and toward the broader competencies people need to use AI meaningfully and ethically.

This does not mean abandoning decisive moments. There will still be points where decisions have to be made, thresholds crossed and credentials awarded. But those decisions can be grounded in richer trajectories of evidence rather than in isolated performances pretending to stand for the whole.

From scripts to evidence ecosystems

Once trajectories matter, the basic unit of assessment changes. The script is no longer enough.

A post-industrial assessment architecture works with an ecosystem of evidence. That evidence may include essays, design portfolios, performances, oral defences, workplace simulations, collaborative artefacts, reflections, annotated revisions and logs of how AI tools were used in the process. The point is not to collect everything indiscriminately. It is to recognise that capability shows itself differently across different settings, and that assessment becomes more trustworthy when it can see those settings together rather than flattening them into one kind of output.

This is one reason comparative judgement has growing significance in an AI-disrupted landscape. In portfolio-based or creative settings, comparative judgement shifts attention back to authentic work and asks experts to evaluate quality in the round rather than forcing everything through a standardised rubric. That is particularly useful where AI can easily help polish a single artefact but has a harder time fabricating a sustained body of coherent work across time, media and context.

The phrase “evidence ecosystem” matters because it suggests relationships rather than accumulation. Different forms of evidence do not all need to collapse into one score. They can illuminate each other. A portfolio may show craft, an oral discussion may reveal understanding, a simulation may expose decision-making under pressure, and a series of drafts may show responsiveness to critique. The goal is not maximal data collection. It is more truthful representation.

From marks to profiles

In the industrial model, marks and grades are treated as the destination. Everything else is scaffolding on the way to the number.

In a post-industrial model, the number becomes one possible projection of a richer picture. There may still be moments when a grade is required, because institutions and systems need shorthand. But the grade no longer pretends to be the full truth. It sits alongside a profile of judgement that can show where strengths lie, where growth is happening, where performance is unstable and what kinds of context matter most.

This change is subtle but profound. A mark tells you where someone landed in one measurement frame. A profile tells you more about how that landing was achieved and what it means. It acknowledges that capability is multi-dimensional and that what matters may not always be fully specifiable in advance.
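One way to picture the difference between a mark and a profile is as a small data structure in which the grade is computed from the profile rather than stored in place of it. The sketch below is only illustrative: the class names `Evidence` and `Profile`, the 0.0 to 1.0 judgement scale, the dimension names, the weights and the grade boundaries are all assumptions invented for this example, not features of any existing system.

```python
from dataclasses import dataclass, field
from statistics import mean, pstdev


@dataclass
class Evidence:
    source: str       # e.g. "portfolio", "oral defence" (hypothetical labels)
    dimension: str    # the aspect of capability this piece speaks to
    judgement: float  # assessor judgement on an assumed 0.0-1.0 scale


@dataclass
class Profile:
    candidate: str
    evidence: list = field(default_factory=list)

    def summary(self):
        """Describe each dimension by level, spread and amount of evidence."""
        grouped = {}
        for item in self.evidence:
            grouped.setdefault(item.dimension, []).append(item.judgement)
        return {
            dim: {"level": mean(vals), "spread": pstdev(vals), "pieces": len(vals)}
            for dim, vals in grouped.items()
        }

    def project_grade(self, weights, boundaries):
        """Collapse the profile into one label: a projection, not the whole picture."""
        summary = self.summary()
        used = [dim for dim in summary if dim in weights]
        total = sum(weights[dim] for dim in used)
        if total == 0:
            return None  # no weighted evidence, so no grade can be projected
        score = sum(weights[dim] * summary[dim]["level"] for dim in used) / total
        for threshold, label in sorted(boundaries, reverse=True):
            if score >= threshold:
                return label
        return None


profile = Profile("candidate-001", [
    Evidence("portfolio", "craft", 0.8),
    Evidence("draft series", "craft", 0.9),
    Evidence("oral defence", "understanding", 0.4),
    Evidence("simulation", "understanding", 0.8),
])
grade = profile.project_grade(
    weights={"craft": 0.5, "understanding": 0.5},
    boundaries=[(0.7, "Distinction"), (0.5, "Merit"), (0.0, "Pass")],
)
```

The grade here is a single label, while `profile.summary()` still shows that the judgements on understanding are far less stable than those on craft. Changing the weights or boundaries changes the grade without touching the underlying evidence, which is the sense in which the number is one projection among several.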

This is already implicit in emerging discussions about assessment reform. Global reviews of AI in assessment design increasingly point toward more diverse evidence, richer representations of student performance and less dependence on traditional single-mode testing. The same shift appears in professional domains beyond education. In architecture, for example, AI is expected to increase the value of human communication, ethical judgement and critical thinking, precisely because the measurable routine parts of work are becoming easier to automate. Assessment that continues to compress those qualities into narrow numerical signals will become progressively less informative.

Human judgement as infrastructure

The decisive break with industrial thinking comes when human judgement is no longer treated as a regrettable source of noise in an otherwise perfect measurement system. Instead, it becomes the infrastructure around which the system is designed.

This does not mean casual subjectivity or elite intuition operating without accountability. It means recognising that in complex domains, the most defensible assessment often comes from structured communities of expert judgement. Comparative judgement offers one route into that world because it makes holistic decisions scalable and auditable. The resulting scale is not handed down in advance by a mark scheme. It is built from the pattern of judgements themselves.
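As a rough illustration of how a scale can be built from judgements alone, the sketch below fits a Bradley-Terry model, one common statistical model for paired comparisons, to a list of (winner, loser) decisions. The post does not name a model, so the choice of Bradley-Terry, the function name `fit_bradley_terry`, the smoothing parameter `prior` and the sample judgements are all assumptions made for this example.

```python
import math
from collections import defaultdict


def fit_bradley_terry(judgements, iterations=200, prior=0.1):
    """Estimate a log-strength scale from (winner, loser) pairs.

    Uses the standard iterative (minorise-maximise) update for the
    Bradley-Terry model. `prior` adds a small number of virtual wins in
    both directions between every pair, an assumed smoothing step that
    keeps estimates finite when a piece of work wins every comparison.
    """
    items = sorted({item for pair in judgements for item in pair})
    if len(items) < 2:
        return {item: 0.0 for item in items}

    wins = {item: 0.0 for item in items}
    compared = defaultdict(float)  # compared[(a, b)]: times a met b
    for winner, loser in judgements:
        wins[winner] += 1.0
        compared[(winner, loser)] += 1.0
        compared[(loser, winner)] += 1.0

    # Smoothing: every ordered pair contributes `prior` virtual wins.
    for a in items:
        for b in items:
            if a != b:
                wins[a] += prior
                compared[(a, b)] += 2 * prior

    strength = {item: 1.0 for item in items}
    for _ in range(iterations):
        updated = {}
        for a in items:
            denom = sum(
                compared[(a, b)] / (strength[a] + strength[b])
                for b in items if b != a
            )
            updated[a] = wins[a] / denom
        # Rescale so the log-strengths sum to zero (the scale has no fixed origin).
        log_mean = sum(math.log(v) for v in updated.values()) / len(items)
        factor = math.exp(log_mean)
        strength = {item: v / factor for item, v in updated.items()}

    return {item: math.log(v) for item, v in strength.items()}


# Hypothetical judgements on three pieces of work, A, B and C.
judgements = [
    ("A", "B"), ("A", "B"), ("A", "B"), ("B", "A"),
    ("B", "C"), ("B", "C"), ("B", "C"), ("C", "B"),
    ("A", "C"), ("A", "C"),
]
scale = fit_bradley_terry(judgements)
ranking = sorted(scale, key=scale.get, reverse=True)
```

No mark scheme appears anywhere in this sketch. The positions on the scale come entirely from who was judged better than whom, and the list of judgements itself remains available as an audit trail. Operational comparative judgement tools add further steps that are omitted here, such as choosing which pairs to present and checking the consistency of individual judges.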

That principle can be extended more widely. A post-industrial system can make room for multiple human perspectives, visible disagreement, moderated discussion and evolving exemplars. It can capture not just the outcome of judgement but the conversation around it. Rather than forcing assessors to hide their reasoning inside a number, it can allow institutions to see where consensus is strong, where it is fragile and where standards are themselves moving.

This is especially important in the presence of AI. When generated outputs become more plausible, human assessors are needed not just as final sign-off points but as interpreters of what matters, what counts as originality, what kinds of assistance are legitimate and what sorts of performance reveal genuine capability. Those are not peripheral tasks. They are the core of fair assessment in an AI-rich world.

AI as participant, not just marker

The industrial instinct is to ask whether AI can mark like a human marker. The post-industrial question is different. It asks how AI can participate in an ecosystem of assessment without becoming the sole source of judgement.

In such an architecture, AI has many possible roles. It can help generate richer tasks and simulations, identify where more evidence may be needed, surface anomalies, suggest informative comparisons and support the management of large evidence sets. It can help candidates reflect on their work and help assessors see patterns they might otherwise miss. UNESCO’s framing of AI and the futures of learning highlights exactly this broader challenge: not simply integrating AI into existing educational routines, but shaping the human and technological conditions under which people can use it safely, ethically and meaningfully.

What AI should not do is lure assessment back into the fantasy that quality can be fully captured by an automated score. The more capable AI becomes, the more tempting that fantasy will be. But professional domains already offer a better analogy. In architecture, for instance, AI is increasingly seen as useful for analysis and support while final decisions still depend on professional judgement, creativity and ethical reasoning. Assessment should learn from that. The task is not to pretend AI can replace judgement, but to use it to make judgement more informed, more scalable and more transparent.

What this means for institutions

None of this implies that every school, university or awarding body can simply discard marks next year. Systems change unevenly. Some industrial forms will remain, particularly where public trust and regulatory simplicity matter. The point is not to demand instant revolution in every setting. It is to begin building the architecture that the next decade will require.

That means institutions need to invest in the capacity to work with richer forms of evidence. They need assessment methods that can cope with authentic work, portfolios and open-ended performance. They need governance models that take human judgement seriously rather than treating it as an embarrassing residue. And they need technological platforms that support these practices rather than dragging everything back toward the single score.

This is where the future of assessment becomes a design problem, not merely a policy one. The question is no longer whether AI will affect assessment. It already has. The question is what kind of structures will organise the interaction between human judgement, machine capability and authentic evidence. Reports on the future of legacy assessment organisations increasingly acknowledge that this future is uncertain as digital and AI-supported forms expand and the centre of gravity begins to shift.

Gradually, then suddenly

One reason incumbent systems misread disruption is that it rarely looks revolutionary at first. For years, it appears as a scattering of pilots, awkward experiments and niche alternatives. AI helps with item generation here, a portfolio route appears there, and a few institutions begin to rely more on projects, oral defences or richer evidence trails. From the centre of the system, nothing much seems to have changed.

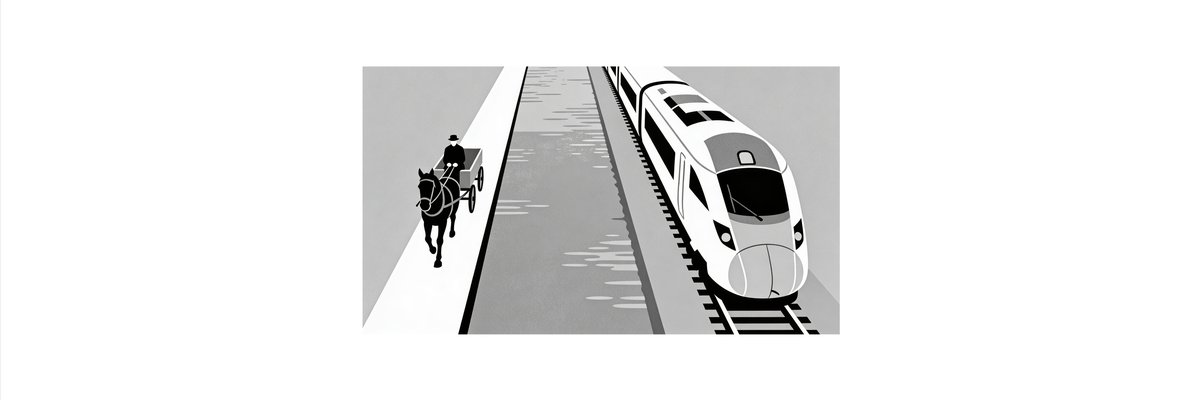

That is exactly what makes disruption dangerous. Ernest Hemingway’s line about going bankrupt, from The Sun Also Rises (“Two ways. Gradually and then suddenly.”), captures how institutional change often feels from the inside. The visible collapse comes late. The real shift happens earlier, while incumbents are still telling themselves that the new tools can be contained inside the old architecture.

Assessment may follow the same pattern. For a while, AI will look like an add-on to existing systems: a support for marking, a way of generating more questions and a tool for identifying anomalies. But if employers, universities and regulators begin to place more value on richer, longitudinal evidence; if traditional scripts become less credible as indicators of independent capability; and if human judgement supported by technology proves more useful than industrial marking in complex domains, then the centre of gravity could move quickly.

When that happens, the change will be described as sudden. In reality, it will have been years in the making.

Choosing the gradual phase well

This matters because the gradual phase is when architecture is chosen. It is when institutions decide whether AI will be used to reinforce the exam-mark-grade regime or to build something better around human judgement, richer evidence and more adaptive forms of assessment. By the time the shift becomes obvious, the important design choices may already have been made.

That is the real choice facing assessment now. The sector can continue to optimise the old machine and hope that speed will compensate for a growing mismatch with the world outside. Or it can recognise that the gradual phase of disruption is exactly when new tracks must be laid.

The industrial age made marking central because it solved the problems of its time. The AI age will reward systems that can go beyond marking without losing fairness, structure or trust. The transition may feel slow for longer than expected. But history suggests that once the threshold is crossed, it will not feel slow at all.