- Opinion

Rediscovering Human Judgement in an Age of AI (Part 3 / 4)

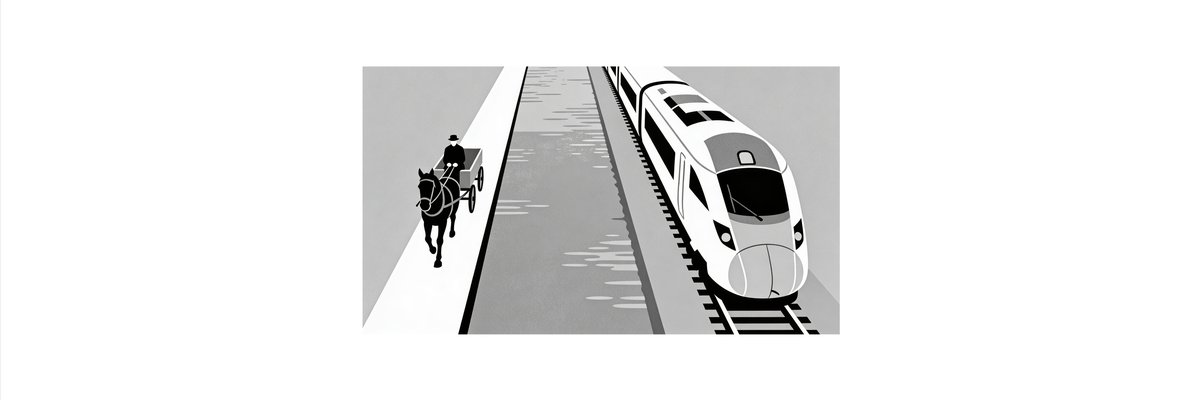

If the first post argued that marking is a child of the Industrial Revolution, and the second showed how AI is mostly being used to build faster horses, this third post is about something older and more fundamental: human judgement itself. Long before there were mark schemes, examiners and scholars were already very good at something that today’s assessment system largely hides: deciding, in context, which performance is better, and being willing to argue about why.

In an AI‑saturated world, that ability is not a quaint relic. It may be the one thing assessment cannot afford to lose.

Humans are better at “which is better?” than “what mark is this?”

Anyone who has moderated marking knows the pattern. Ask a group of experienced teachers to decide whether Essay A is better than Essay B and you get quick, confident convergence. Ask them to give Essay A a mark out of 40 in isolation and you get an untidy spread. The same is true in many domains: experts agree more readily on relative quality than on precise numerical scores.

That is the intuition behind comparative judgement. Instead of forcing assessors to assign absolute marks, comparative judgement asks them to make a series of paired comparisons: between these two pieces of work, which is better overall? Each judgement is simple, holistic and contextual. It matches the way expertise actually feels from the inside, where the reasons for a decision are often a tangle of tacit knowledge, pattern recognition and past experience.

What makes this powerful now is that technology can turn many such pairwise choices into something stable and usable. Given enough comparisons, it is possible to build a scale on which each piece of work finds a position, not because it has been assigned a mark, but because it has been repeatedly compared and found better than some work and not as good as others. Quality emerges from a network of decisions, not from a single pass with a mark scheme.
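The post does not name a statistical model for this step. Comparative judgement tools commonly use the Bradley-Terry model (or the closely related Rasch formulation), so the sketch below assumes that model. It fits a scale to a list of hypothetical `(winner, loser)` decisions using the standard minorise-maximise (MM) update. It is an illustration under those assumptions, not how any particular platform computes its scale.

```python
import math
from collections import defaultdict


def fit_bradley_terry(judgements, iterations=200):
    """Turn (winner, loser) decisions into a relative quality scale.

    Fits a Bradley-Terry model with the MM (minorise-maximise) update and
    returns each item's log-strength, centred so the scores sum to zero.
    Raises if any item never won or never lost, because its estimate would
    not be finite. Real data also need a connected comparison network;
    this sketch does not check that.
    """
    items = sorted({item for pair in judgements for item in pair})
    wins = defaultdict(int)      # how often each item was preferred
    losses = defaultdict(int)    # how often each item lost
    n_pair = defaultdict(int)    # number of comparisons per unordered pair
    for winner, loser in judgements:
        wins[winner] += 1
        losses[loser] += 1
        n_pair[frozenset((winner, loser))] += 1
    if any(wins[i] == 0 or losses[i] == 0 for i in items):
        raise ValueError("every item needs at least one win and one loss")

    strength = {i: 1.0 for i in items}
    for _ in range(iterations):
        updated = {}
        for i in items:
            # Sum of n_ij / (s_i + s_j) over every opponent j that i has met.
            denom = sum(
                n / (strength[i] + strength[j])
                for pair, n in n_pair.items() if i in pair
                for j in pair - {i}
            )
            updated[i] = wins[i] / denom
        # Only relative strength matters, so fix the geometric mean at 1.
        log_mean = sum(math.log(s) for s in updated.values()) / len(updated)
        strength = {i: s / math.exp(log_mean) for i, s in updated.items()}
    return {i: math.log(s) for i, s in strength.items()}


# Hypothetical data: three scripts, each pair compared three times.
decisions = [("A", "B"), ("A", "B"), ("B", "A"),
             ("B", "C"), ("B", "C"), ("C", "B"),
             ("A", "C"), ("A", "C"), ("C", "A")]
scale = fit_bradley_terry(decisions)
print(sorted(scale, key=scale.get, reverse=True))  # ['A', 'B', 'C']
```

No script here is ever given a mark. Its position comes entirely from which scripts it beat and which beat it, weighted by how strong those opponents turned out to be.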

That is a very different picture from traditional marking. It does not ask assessors to carve reality into boxes and numbers and then pretend those numbers are the thing that mattered all along. It asks them to do something more natural, and then uses computation to make sense of the pattern.

Disputation: disagreement as a feature, not a bug

There is a second, older tradition worth reclaiming: disputation. In medieval and early modern universities, disputation was a central academic practice. Candidates did not simply write alone in silence; they defended theses, responded to objections and engaged in argument. Assessment here was not a one‑way act of inspection. It was a structured conversation in which reasoning, responsiveness and intellectual character were visible.

Modern assessment has pushed most of this underground. Disagreement between markers is usually treated as error to be minimised. Appeals and challenges are seen as exceptions to be processed, not as normal parts of how knowledge and quality are established. The result is a system that aspires to smooth, silent consensus. Marks are communicated; the arguments that could have generated them are mostly lost.

In a complex world, that smoothness is misleading. Where problems are open‑ended and contexts change quickly, good judgement is rarely unanimous. It is often contested, provisional and revisable. Making disagreement visible can be more informative than forcing it underground. If two experienced assessors consistently disagree about certain kinds of work, perhaps the work is genuinely boundary‑pushing, or the criteria are unstable, or the context has shifted. Treating that disagreement as noise throws away signal the system badly needs.

Disputation offers a different ethic. It says that judgement is sharpened in dialogue and that the reasons for a decision matter as much as the decision itself. In the age of AI, when systems can produce plausible answers without understanding, that ethic is a useful counterpoint. It puts weight back on explanation, critique and the ability to defend a view under scrutiny.

Why this fits an AI‑rich, complex world

AI changes the landscape in two ways. First, it makes it easier than ever to produce fluent, superficially impressive work on demand. Second, it can itself be a participant in assessment: as a tool, as a source of feedback, and as a generator of new situations in which capability is revealed.

Those shifts undermine the idea that a single, time‑limited script can reliably stand for what a person knows and can do. They also undermine the comfort that comes from having every mark trace back to a neat criterion. If a general‑purpose model can tick many of the same boxes as a human, then the boxes themselves may be the wrong ones.

Comparative judgement responds by insisting that quality is relational. A piece of work is not judged in isolation, but against a living standard made up of other work. If AI‑generated answers flood the system, they simply become part of that reference set: sometimes better, sometimes worse, but always in relation to what else is possible. The question shifts from “does this meet the rubric?” to “is this better than what we are already seeing?” That is a more robust way to operate in a fast‑moving space.

Disputation responds by insisting that understanding is more than production. It invites candidates, assessors and, potentially, AI systems into conversations where reasons are exchanged, positions challenged and weaknesses exposed. An answer that looks strong on the surface can be tested under pressure. An AI tool that produces good first drafts can be part of that process, but it cannot substitute for the human capacity to notice what is interesting, troubling or genuinely new.

Together, these approaches treat assessment as part of an ongoing process of making sense of complex performances, not as a one‑off act of measurement. They fit naturally with the idea that humans and AI will work together, that learning will be continuous, and that what matters cannot always be fully specified in advance.

Technology as scaffolding for judgement, not a replacement

It is tempting to think of technology in assessment in purely automating terms. If a model can approximate human marking on a set of scripts, why not let it take over, at least in low‑stakes contexts? But this view is still bound to the industrial logic of marking. It asks, “Can the machine do what the human marker does?” and stops there.

A different question is possible: “How can technology help organise and amplify human judgement so that it can handle complexity at scale?”

Comparative judgement platforms already gesture in this direction. They anonymise and route work to judges, ensure that each performance is seen enough times and in varied pairings, and produce a coherent scale and reliability evidence from the resulting network of decisions. They do not tell experts what to think; they make it possible for many small, local acts of judgement to add up to something stable and useful.
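The routing described above can be sketched in a few lines. This is a minimal illustration, not how any real platform schedules work. The function names `schedule_pairs` and `assign_to_judges` are hypothetical, and the sketch makes three assumptions: every script should appear in at least `min_appearances` comparisons, pairings should not repeat while fresh ones remain, and left/right position should be randomised.

```python
import random


def schedule_pairs(items, min_appearances, seed=0):
    """Build comparison pairs so every item is seen at least `min_appearances`
    times, preferring fresh pairings and rarely-seen partners."""
    if len(items) < 2:
        raise ValueError("need at least two items to compare")
    rng = random.Random(seed)
    counts = {item: 0 for item in items}
    used = set()
    pairs = []
    while min(counts.values()) < min_appearances:
        # Start from an item that has been seen least so far.
        lowest = min(counts.values())
        item = rng.choice([i for i, c in counts.items() if c == lowest])
        others = [o for o in items if o != item]
        fresh = [o for o in others if frozenset((item, o)) not in used]
        candidates = fresh or others  # repeat a pairing only when none are fresh
        # Prefer partners that have also been seen rarely, for balanced exposure.
        fewest = min(counts[o] for o in candidates)
        partner = rng.choice([o for o in candidates if counts[o] == fewest])
        used.add(frozenset((item, partner)))
        counts[item] += 1
        counts[partner] += 1
        # Randomise left/right so position carries no information for judges.
        pairs.append((item, partner) if rng.random() < 0.5 else (partner, item))
    return pairs


def assign_to_judges(pairs, judges):
    """Deal pairs out to judges in turn (simple round-robin)."""
    return {judge: pairs[k::len(judges)] for k, judge in enumerate(judges)}


scripts = [f"script-{n}" for n in range(6)]
batches = assign_to_judges(schedule_pairs(scripts, 3), ["j1", "j2", "j3"])
```

Real platforms are likely to weigh more than this, such as judge workload, conflicts of interest and adaptive pairing. The point of the sketch is only that "seen enough times, in varied pairings" is a simple, checkable constraint.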

The same infrastructure can support disputation. It can connect assessors who disagree, surface cases where decisions are unstable, and make it easy to attach commentary, counter‑examples and evolving exemplars to particular regions of a scale. Over time, the system does not simply hold scores. It holds a living map of how a community understands quality, where its disagreements lie, and how that understanding has shifted.
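Surfacing unstable decisions, and the assessors on either side of them, can start from something very plain: looking for pairs on which judges split. The sketch below is hypothetical. It assumes judgements are stored as `(judge, winner, loser)` tuples and treats a pair as contested when at least 30% of its decisions went against the majority. That threshold is an arbitrary illustrative choice.

```python
from collections import defaultdict


def unstable_pairs(judgements, min_judgements=3, threshold=0.3):
    """Flag pairs of scripts on which judges disagree.

    For each unordered pair seen at least `min_judgements` times, the
    minority share is the fraction of decisions that went against the
    majority. Pairs at or above `threshold` are returned, most contested
    first, together with which judges chose which script.
    """
    votes = defaultdict(lambda: defaultdict(list))
    for judge, winner, loser in judgements:
        votes[frozenset((winner, loser))][winner].append(judge)

    flagged = []
    for pair, by_winner in votes.items():
        total = sum(len(js) for js in by_winner.values())
        if total < min_judgements:
            continue  # too few decisions to call it disagreement
        majority = max(len(js) for js in by_winner.values())
        minority_share = 1 - majority / total
        if minority_share >= threshold:
            flagged.append({
                "pair": tuple(sorted(pair)),
                "minority_share": minority_share,
                "judges_by_choice": {w: sorted(js) for w, js in by_winner.items()},
            })
    flagged.sort(key=lambda row: row["minority_share"], reverse=True)
    return flagged
```

Returning the judges on each side, rather than only a disagreement statistic, is the design choice that matters here. It turns a flagged pair into an invitation for those assessors to argue it out and attach their reasoning, instead of a number to be averaged away.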

AI can sit alongside this in multiple roles. It can propose pairings that are likely to be informative, suggest where additional judgements would add the most value, or draft explanations and counter‑arguments that humans refine. It can simulate edge cases, help visualise trajectories, and detect patterns in how judgements change over time. Crucially, it does these things in service of human judgement, not as its replacement.
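"Pairings that are likely to be informative" has a concrete meaning under a Bradley-Terry style model. A judgement tells you most when its outcome is least predictable, that is, when two scripts currently look evenly matched. The sketch below applies that rule. It is a simple statistical heuristic rather than an AI system, and it assumes scores on the log-strength scale used by Bradley-Terry. The function `most_informative_pairs` is hypothetical.

```python
import math
from itertools import combinations


def most_informative_pairs(scores, k=3, already_judged=None):
    """Rank candidate pairs by the information one more judgement would add.

    Under Bradley-Terry, P(a beats b) = 1 / (1 + exp(-(score_a - score_b))).
    The Fisher information of a single judgement, p * (1 - p), peaks at
    p = 0.5, i.e. for closely matched scripts. Pairs in `already_judged`
    are skipped.
    """
    seen = {frozenset(p) for p in (already_judged or [])}
    ranked = []
    for a, b in combinations(sorted(scores), 2):
        if frozenset((a, b)) in seen:
            continue
        p = 1 / (1 + math.exp(-(scores[a] - scores[b])))
        ranked.append((p * (1 - p), a, b))
    ranked.sort(reverse=True)
    return [(a, b) for _info, a, b in ranked[:k]]
```

A tool like this only proposes where human attention would be best spent. Which script is actually better is still left to the judges.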

In that vision, the central act of assessment is not the mark. It is the judgement, situated in a web of other judgements and supported by tools that help a community see and respond to its own thinking.

Towards a new centre of gravity

If the Industrial Revolution pulled assessment away from disputation and towards marking, the AI revolution offers a chance to move back, but with better tools. The aim is not to recreate some imagined golden age of oral examinations and closed scholarly circles. It is to put expert human judgement back at the centre, in forms that are transparent, scalable and open to scrutiny.

That will not happen by accident. The easier path is the one already being taken: use AI to prop up the mark‑based system for as long as possible and hope its cracks can be patched. But there is another path, and it starts by treating human comparative judgement and structured disagreement as the core assets, not as sources of noise.

In the final post, the focus will shift from methods to architecture. If comparative judgement and disputation are the building blocks, what kind of assessment ecosystem can be assembled from them? How might evidence flow, how might AI and humans collaborate, and what would it mean for marks and grades to become one output among many rather than the organising centre?

Those are questions for next time. For now, the key point is simple: in a complex, AI‑rich world, assessment will stand or fall on the quality of human judgement it can mobilise. The task is not to make that judgement disappear behind ever more sophisticated machinery. It is to design systems in which it can do its best work.