Blog

Posts for category: Opinion

-

Resilience Is No Longer Enough: Why Curriculum and Assessment Need Antifragility

For years, we’ve been told that education systems need to be more “resilient”. Resilient curriculum. Resilient assessment. Resilient students. In a world of AI, that sounds reassuring, but it quietly sets the wrong ambition. Resilience, in the way we usually use it, means being able to withstand shocks and stay the same. The problem is that AI is not a one‑off storm. It is a permanent change in the climate.

-

Assessing Big Ideas: Why ACJ Belongs with UbD

UbD (Understanding by Design®) encourages teachers to design authentic performance tasks: essays, presentations, podcasts, investigations, portfolios, prototypes, and other complex responses that make big ideas visible. But when that work has to be judged, the system often falls back on tools and habits better suited to narrower, more standardised forms of assessment.

-

From Medieval Guilds to AI: How Guild Knowledge is Becoming the Missing Piece for Modern Learning

Walk into a medieval guildhall and a modern AI lab and, on the surface, they could not be more different. One smells of timber, leather and metal; the other of coffee and electronics. Yet both are wrestling with the same underlying question: how does a community grow and protect its shared sense of what “good” looks like – and keep that sense of quality alive when everything around it is changing?

-

For 500 years, guilds were how expertise worked. Then we forgot. Now we're remembering.

The craft guilds of medieval Europe, such as those of the goldsmiths, weavers, stonemasons and apothecaries, are often remembered as protectionist trade associations. That reputation is not entirely undeserved. But it obscures something more important: they were the most sophisticated knowledge transmission institutions the pre-modern world produced.

-

VAR, Kayfabe and Why Assessment Can Feel Fake

Rick Rubin once said that professional wrestling is “real” and everything else is fake. Wrestling is honest about its kayfabe – the shared pretence that what you’re watching is a contest, even though everyone involved knows it’s scripted. The fiction is part of the product.

-

When the Black Box Gets Good

For a long time, the debate about AI in assessment focused on capability. Could AI produce judgements that were reliable enough to take seriously? Could it handle open-ended responses, complex performances, or evidence of learning that did not fit neatly into a mark scheme?

-

Newsletter May 2026

RM Compare launches a new era of on-demand Comparative Judgement. There has never been a more uncertain time in education. There has never been a more exciting time to be in education.

-

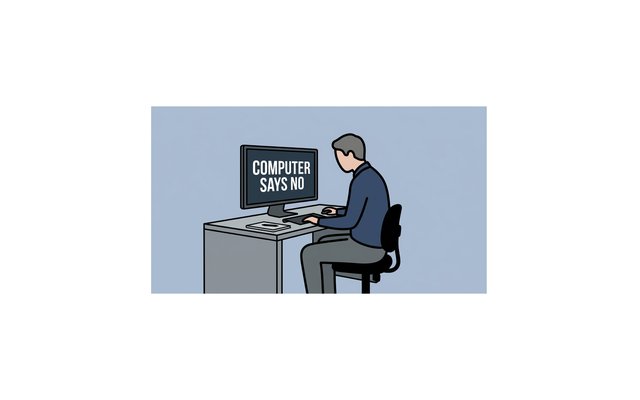

Lessons from Software Engineering: Why AI in Assessment May Be Solving the Wrong Problem

Every few years, a body of evidence emerges from an unexpected direction that turns out to be exactly what education needed to hear. We think this might be one of those moments.

-

AI Assessment Has a Dignity Problem: Here's How to Fix It

AI assessment has a dignity problem. Not a technology problem, or even just a fairness problem, but a problem with how it treats people at precisely the moments they are most exposed and most human.