
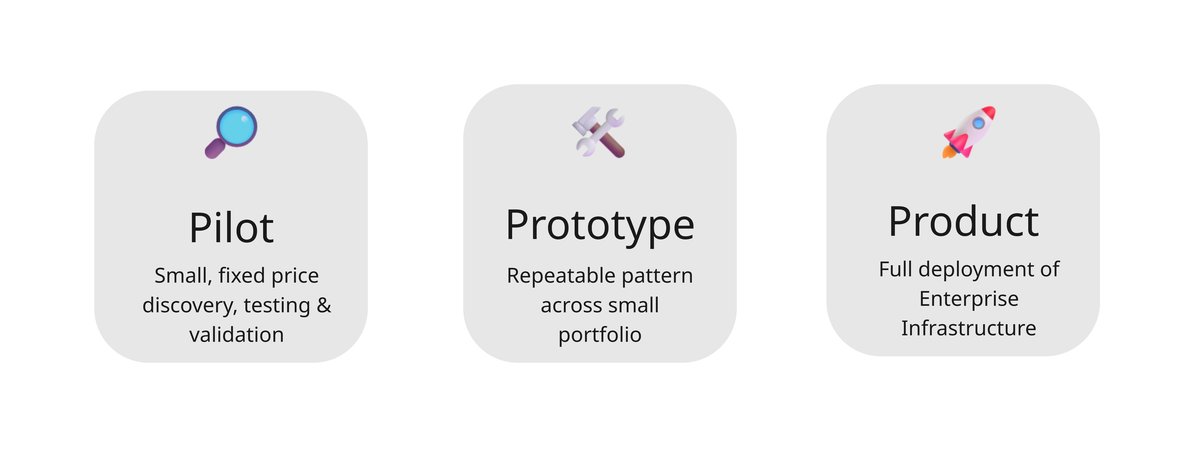

Pilot > Prototype > Product

We are with you all the way: working with Enterprise

Exam Board / Awarding Organisation example

Pilot – Validate the idea

Start with a fixed‑price pilot on a single component or programme.

We run a parallel Adaptive Comparative Judgement (ACJ) session, audit your current standards, and give you clear evidence on reliability and bias that you can share internally.

Prototype – Prove the pattern

Next, we scale a proven pattern across a small portfolio.

Using the same platform and APIs, we test how ACJ or AI Validation works operationally across more centres, markers and cohorts.

Product – Embed the infrastructure

Finally, RM Compare becomes part of your regular assessment toolbox.

You move to an enterprise subscription with judgement‑capacity bands, using 💻|Studio, 🔗|Hub and 📳|Live for standards, moderation and validation every year.

Pilot / Prototype Example

ACJ Pilot & Standards

- Single component or coursework/practical.

- Design of sampling and ACJ session.

- Ranked ruler, cut‑point proposal, exemplar set, reliability summary.

Examiner Calibration & Moderation

- ACJ‑based calibration events for examiners.

- Outlier and consistency reporting.

- Exemplar packs you can reuse in your own systems.

AI Validation Study

- Compare AI‑generated marks with human ACJ judgements.

- Bias, drift and accuracy analysis.

- Clear recommendations on safe use and governance.
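As a rough illustration of what comparing AI-generated marks with human ACJ judgements can involve, the sketch below computes rank agreement (Spearman correlation) and the average rank shift per candidate group. It is a minimal example under stated assumptions, not the RM Compare method or API: the function names, the group labels and the sample figures are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across any run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(ai_marks, human_scores):
    """Rank agreement between AI marks and human ACJ scale values."""
    return pearson(ranks(ai_marks), ranks(human_scores))


def mean_rank_shift_by_group(ai_marks, human_scores, groups):
    """Average (AI rank - human rank) per group; a non-zero mean hints at bias."""
    ai_r, human_r = ranks(ai_marks), ranks(human_scores)
    shifts = defaultdict(list)
    for a, h, g in zip(ai_r, human_r, groups):
        shifts[g].append(a - h)
    return {g: mean(v) for g, v in shifts.items()}


# Hypothetical data: five scripts, human ACJ scale values and AI marks.
human_acj = [-1.2, -0.4, 0.1, 0.9, 1.5]
ai_marks = [10, 14, 13, 18, 20]
groups = ["A", "A", "B", "B", "B"]

agreement = spearman(ai_marks, human_acj)
shift = mean_rank_shift_by_group(ai_marks, human_acj, groups)
print(agreement, shift)
```

Ranks are used rather than raw values because ACJ scale values and AI marks sit on different scales. A real study would also look at drift over time and use far larger samples.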

(All packages are delivered using the standard RM Compare platform and APIs; no custom forks.)

Partner with us

We work in cross‑functional teams with your staff and academic partners.

Our goal is for you to own the “final mile” locally, while RM Compare provides the judgement infrastructure and patterns you can reuse nationally and internationally.