Newsletter May 2026

There has never been a more uncertain time in education. There has never been a more exciting time to be in education.

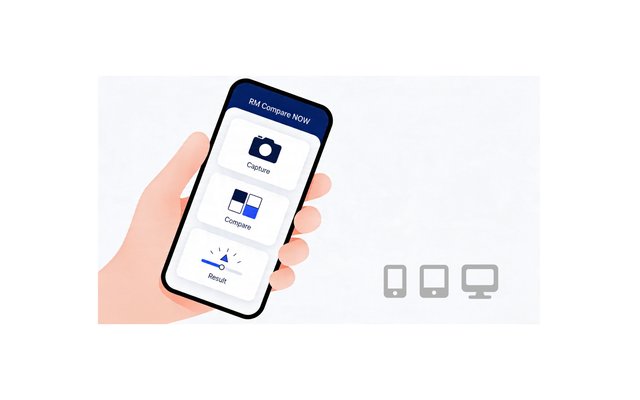

We’re pleased to announce that ⏱️RM Compare | NOW is now in BETA. It is a lightweight RM Compare experience designed for quick judgements, allowing users to capture or upload an item, compare it to a ready-made standard, and review a score in just a few steps.

Just like everyone else in the UK, we are getting very excited about National Tea Day, which takes place on the 21st April. The day is a great opportunity to share tea brewing preferences, and the strength of the perfect 'cuppa' is always hotly debated. So we thought it would be interesting to get the view of AI.

In 1792, revolutionaries in Paris abolished a king, Americans calmly re‑elected a president, and Cambridge quietly invented something that still shapes millions of lives every year: exam marking. While politics and industry were being rebuilt in public, assessment was being rebuilt on paper. Two centuries later, we are still living inside that decision – and only now starting to see its limits.

Early steam engines were bolted onto canal boats instead of horses. Today, autonomous driving is marketed as a convenience feature in conventional cars rather than a reason to rethink how mobility works. And in assessment, AI is being pulled into precisely the same pattern: a new engine strapped onto an old chassis.

For more than 200 years, formal assessment has been organised around a deceptively simple idea: break performance into parts, assign marks, add them up, and trust the resulting number. Written exams and numerical grading feel so natural that it is hard to imagine assessment working any other way.

Generative and agentic AI are exposing the limits of assessment systems built for a more predictable, more mechanical world. In response, much of the assessment sector is treating AI as a way to mark faster, moderate more cheaply, and preserve the familiar exam–mark–grade machinery. This will not end well.

When people first encounter RM Compare, they usually see it as what our homepage says it is: the world‑leading Adaptive Comparative Judgement system. That description is accurate, but as customers start integrating RM Compare more deeply, a different question often emerges: is this just another application we use, or is it actually part of our assessment infrastructure?

Not so long ago, the idea that an AI system could out‑analyse a room full of economists would have sounded like science fiction. Yet that’s exactly what a recent Federal Reserve working paper set out to test.

A lightweight RM Compare experience for quick judgements. Capture or upload an item, compare it to a ready-made standard, and review your score in just a few steps.

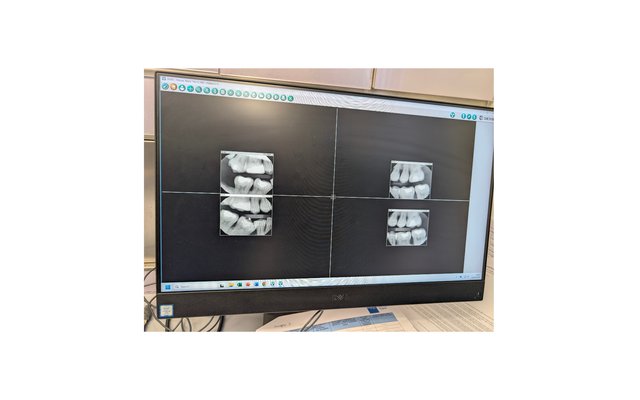

This morning I went for a routine dental check‑up. What struck me was how naturally my dentist fell into a comparative frame. He wasn’t mentally ticking boxes on a rubric. He was looking at two artefacts and asking, “Which represents a healthier, more stable situation?”

The story of the Barometer Question is usually told as a joke at the expense of an inflexible examiner. But if you look at it through an assessment lens, it is really a story about design failure, construct clarity, and the importance of a strong holistic statement of quality.