RM Compare Roadmap

What's Now, Next and Later

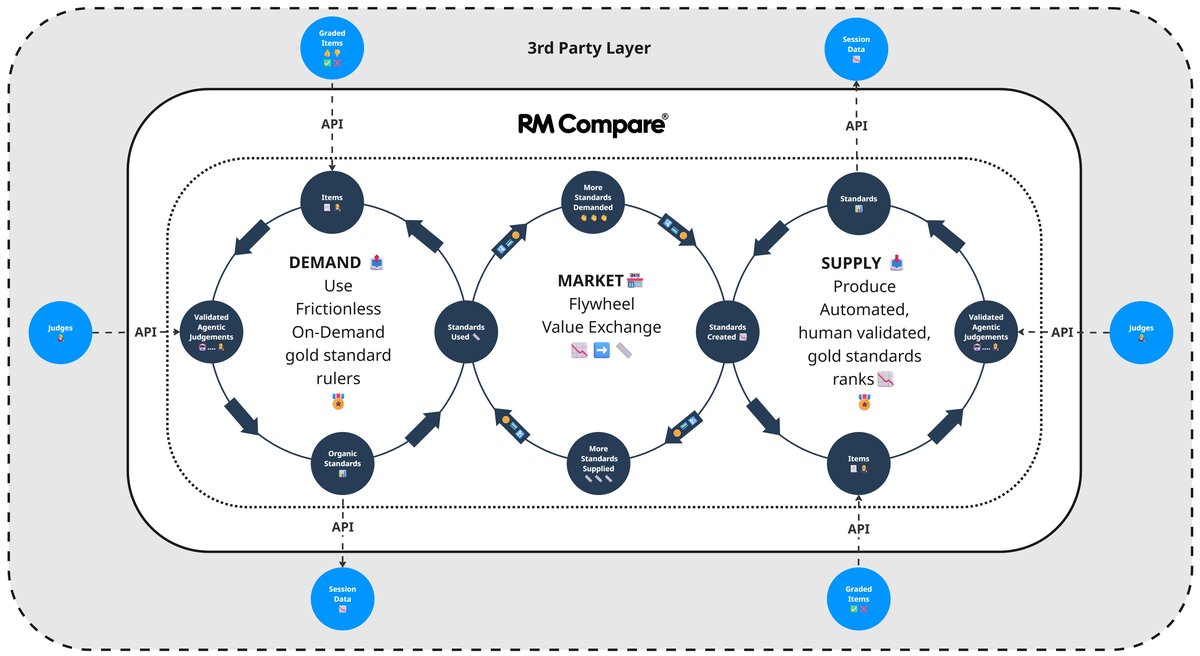

RM Compare takes a 'continuous' approach to innovation. We don’t just release versions; we evolve our ecosystem to consistently deliver deeper value.

Our roadmap focuses on strengthening RM Compare as assessment infrastructure – the platform others use to create, share and apply standards at scale. These investments build a shared standards library and, over time, enable public and private marketplaces of rulers.

Visit this page regularly for updates, and sign up for the newsletter.

What WE ARE working on Now (The Capture Layer)

- Multi-Format Capture: Streamlining the recording of authentic evidence (audio, video, and rich media) at the moment learning happens.

- On-Demand Assessment: Increasing everyday use of rulers on all device types, so new material can be judged against trusted standards whenever it is needed.

What WE THINK we will be working on Next (Intelligent Insight)

- Item Author Users: Adding a new category of user.

- Steady State I: Allowing new items/tasks to be ingested into an existing ruler and calibrated onto the same scale, so the ruler becomes a “living” measurement instrument for a domain, not just for the original task set. This will let systems refresh tasks, align new curricula, and still report progress and standards against a single underlying ruler, moving us much closer to the full Steady State vision of a continuously maintained, multi‑task, multi‑cohort scale.

- Ecosystem Synergy: Deepening the connection with the RM Assessment platform, RM Ava, to create a unified journey from classroom capture to formal qualification.

- Datashare Enhancements: Supporting safe sharing of rulers across licences (a foundation for the standards marketplace).

What WE MIGHT be working on Later (The Professional Compass)

- Steady State II: Longer‑term, we aim to support multiple interconnected rulers per domain, governed across all Editions and exposed via APIs, so partners and systems can treat RM Compare as standards infrastructure: they call our rulers when they need to establish or apply performance standards.

- System-Wide Visibility: Developing visualisation tools that transform individual captures into a strategic view of curriculum density for leadership.

- AI-Enhanced Judgment Synthesis: Leveraging Large Language Models (LLMs) to analyse the collective consensus of a session.

- National Standards Benchmarking: Secure, anonymised ways for organisations to align internal standards with a national consensus.

- The Sovereign Evidence Portfolio: A learner-owned digital vault providing a verifiable, judgment-led history of an individual's "Learning Journey."

- AI Validation Layer: Offering a calibration engine to test and verify AI‑assisted marking systems against trusted human ‘Gold Standards’, including repeated evaluation rounds as models and agents evolve.

- API-First Integration: Allowing partners to programmatically access our comparison engine, session metrics and ‘rulers’ within their own systems, including using RM Compare as an evaluation service for their AI agents.

- Edge Intelligence & Local Inference: On-device and in-browser AI to enhance privacy and performance.

- Agent Evaluation & Alignment: Enabling organisations to benchmark and continuously tune AI agents (such as LLM‑based assistants and auto‑markers) against expert judgement, using large‑scale ranks and ongoing re‑evaluation rounds.

- Agentic Quality Assurance (The Intelligent Moderator): We are exploring the transition from passive data insights to Agentic AI. This system will act as a proactive digital moderator, autonomously monitoring live sessions to identify marker bias, flag inconsistent judgments, and suggest real-time interventions to protect the integrity of the national standard.

- Longitudinal Standards Calibration (Historical Mapping): To support long-term Sovereignty and Portability, we are investigating features that allow for "Standard-to-Standard" mapping. This would enable users to calibrate current session results against historical "Gold Standard" anchors, allowing for the precise tracking of standards and student progress over multi-year cycles.