Newsletter May 2026

There has never been a more uncertain time in education. There has never been a more exciting time to be in education.

We’re pleased to announce that ⏱️RM Compare | NOW is now in BETA. It is a lightweight RM Compare experience designed for quick judgements, allowing users to capture or upload an item, compare it to a ready-made standard, and review a score in just a few steps.

Just like everyone else in the UK, we are getting very excited about National Tea Day, which takes place on the 21st April. The day is a great opportunity to share tea-brewing preferences, and the strength of the perfect 'cuppa' is always hotly debated. So we thought it would be interesting to get the view of AI.

In 1792, revolutionaries in Paris abolished a king, Americans calmly re‑elected a president, and Cambridge quietly invented something that still shapes millions of lives every year: exam marking. While politics and industry were being rebuilt in public, assessment was being rebuilt on paper. Two centuries later, we are still living inside that decision – and only now starting to see its limits.

Over this series, we’ve tried to do three things: explain why UK copyright policy on AI is shifting, argue that student work should be treated as creative property rather than free fuel, and sketch when – if ever – training on that work might be legitimate.

So far in this series, we’ve argued that student work is creative property, not just “data”, and that assessment use and training use are not the same thing. That naturally leads to a harder question: is it ever acceptable to train models on student work – and if so, on what terms?

When people talk about AI in assessment, the conversation usually goes straight to marking accuracy, bias or workload. Underneath all of that sits a quieter question that is just as important: who actually owns the work being processed – and what does that ownership mean once AI is involved?

For a while, it looked like AI developers might get a broad “free pass” to train on almost anything they could scrape. That idea has now been quietly parked. The UK government’s latest report on copyright and artificial intelligence signals a very different direction – one that matters a lot if you’re responsible for student work and assessment.

Every year in England, some things in education feel almost guaranteed. Exam season will arrive on schedule, bringing with it the familiar mix of anxiety, hope and hard work in schools up and down the country. Ofqual will emphasise that our qualifications system is robustly designed, closely regulated and delivering grades that are fair and can be trusted. And Dennis Sherwood will publish fresh analysis arguing – drawing largely on Ofqual’s own technical reports – that neither the level of fairness nor the level of trust we assume is quite what it seems.

The latest OECD Reimagining Teaching in an Accelerating World report makes one thing very clear: teaching and assessment can’t stay as they are. As AI reshapes what students can do with a few prompts, the things that are easiest to test have become the easiest to automate. Systems everywhere are being pushed to value richer learning, trust professionals more, and treat AI as an educational ally rather than a threat.

A new study has given Adaptive Comparative Judgement (ACJ) one of its toughest tests yet: using it to assess long, complex law essays in a real university context. The results are encouraging for anyone interested in more reliable, fair and meaningful assessment – and they also highlight some very practical design questions we, as a community, need to solve together.

Generative AI has broken one of higher education’s quiet assumptions: that a polished essay is a reliable proxy for student thinking. When tools can generate fluent academic prose on demand, we can no longer treat the final product as straightforward evidence of cognitive effort or authorship. The question for universities is no longer, “How can we prove this text wasn’t written by AI?” but “How can we design assessments where AI cannot replace the student’s contribution, only support it?”

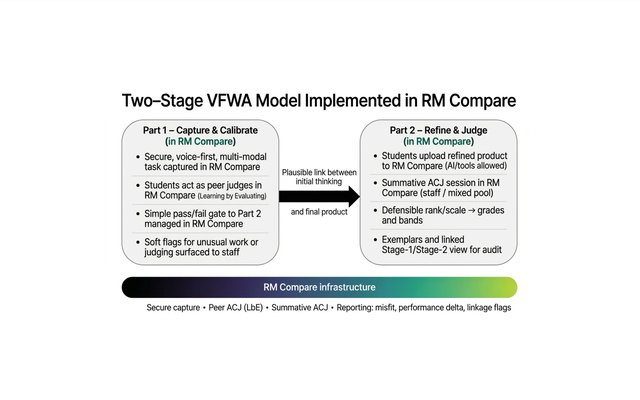

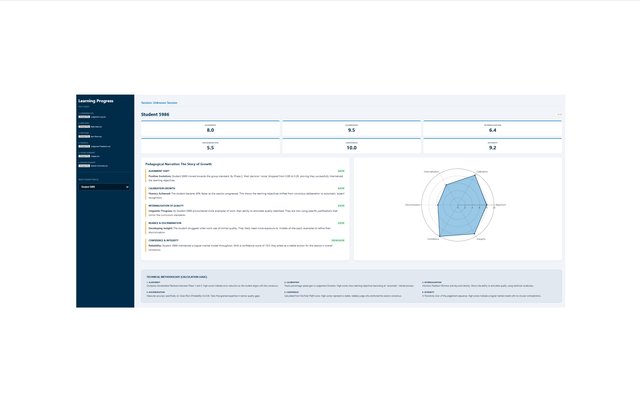

At RM Compare, we believe that the true value of Comparative Judgement isn't just found in the final rank order (the product), but in the cognitive journey students take to get there (the process). Today, we are excited to share an experimental piece of work: the Learning Progress Dashboard.