Newsletter May 2026

In this edition we are thrilled to announce the long-awaited arrival of ⏱️RM Compare | NOW - a mobile-first, on-demand Comparative Judgement experience.

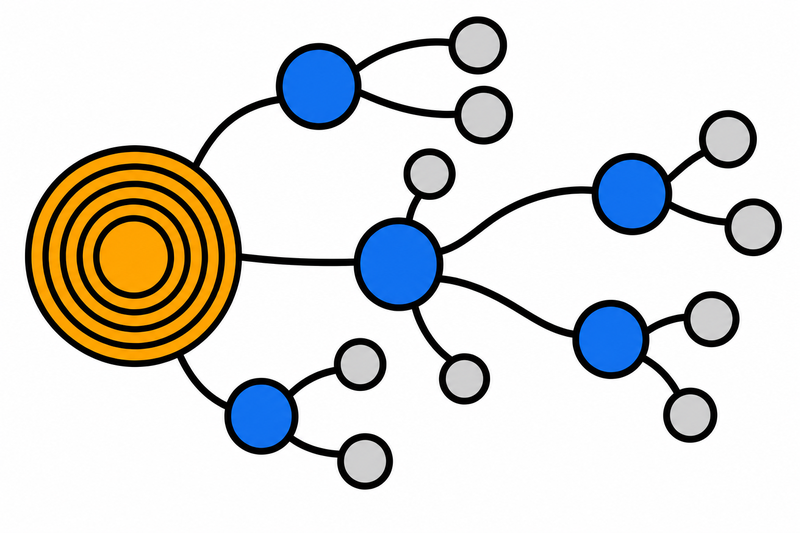

Our Ecosystem now provides exciting new capabilities to both end users and system managers. These capabilities allow forward-thinking organisations to consider Comparative Judgement in a new light - the opportunities ahead are truly groundbreaking.

Things are currently in ALPHA - if you would like access, simply Get in Touch.

We really are just getting started - check out the Roadmap to see where we might be heading next.

New Product Features and Functionality

⏱️RM Compare | NOW is in ALPHA

We’re pleased to announce that ⏱️RM Compare | NOW is in ALPHA. It is a lightweight RM Compare experience designed for quick judgements, allowing users to capture or upload an item, compare it to a ready-made standard, and review a score in just a few steps.

From Steady State to Rulers: how RM Compare is building the future of shared standards

Assessment systems often talk about standards, but too often those standards remain abstract. Teachers, examiners and assessors are expected to align to a wider benchmark, yet in day-to-day practice they usually see only the work directly in front of them: their own class, their own cohort, their own centre. That gap matters.

Assessment Operations

Existing customer announcement

RM Assessment, which provides RM Compare, is moving to a new legal entity from 1 June 2026.

Is your software high quality, how do you know – and does it matter?

When important decisions, results or reputations depend on a piece of software, “quality” stops being a nice‑to‑have. It becomes the question of whether that system will be there when people need it, and whether it can adapt as your needs change.

Is RM Compare an Assessment Software Application or Infrastructure?

When people first encounter RM Compare, they usually see it as what our homepage says it is: the world‑leading Adaptive Comparative Judgement system. That description is accurate, but as customers start integrating RM Compare more deeply, a different question often emerges: is this just another application we use, or is it actually part of our assessment infrastructure?

Policy and Government Affairs

The OECD Just Mapped the Certification Problem. Here's the Solution.

A response to the OECD's The Theory and Practice of Upper Secondary Certification (2026). The OECD report has mapped the territory of the problem with exceptional care. The standardisation challenge is real, it is persistent, and it has defeated every country that has tried to solve it from within the existing paradigm. The solution is not a better mark scheme. It is a better question.

Thinking about AI (and other stuff)

Lessons from Software Engineering: Why AI in Assessment May Be Solving the Wrong Problem

Every few years, a body of evidence emerges from an unexpected direction that turns out to be exactly what education needed to hear. We think this might be one of those moments.

AI Assessment has a dignity problem - here's how to fix it

AI assessment has a dignity problem. Not a technology problem, or even just a fairness problem, but a problem with how it treats people at precisely the moments they are most exposed and most human.

Why AI Demands a New Architecture for Assessment (4 Part Series)

Generative and agentic AI are exposing the limits of assessment systems built for a more predictable, more mechanical world. In response, much of the assessment sector is treating AI as a way to mark faster, moderate more cheaply, and preserve the familiar exam–mark–grade machinery. This will not end well.

When AI Beats Economists – And Why That’s Good News For Assessment

Not so long ago, the idea that an AI system could out‑analyse a room full of economists would have sounded like science fiction. Yet that’s exactly what a recent Federal Reserve working paper set out to test.

Economist Charles Goodhart warned that “when a measure becomes a target, it ceases to be a good measure.” The paperclip AI is that warning turned into a toy: once “number of paperclips” becomes the only thing that counts, every other value is expendable. When I look at how AI is being introduced into education, especially assessment, I sometimes worry we are drifting towards our own paperclip machines.

Research Update

Using Comparative Judgement to keep exam grades fair when tests change (OFQUAL Research report 2025)

Every year, exams change. New papers are written, formats evolve, and sometimes whole qualifications are refreshed. Yet everyone, from students and parents to teachers and universities, still expects one simple promise to hold: a grade this year should mean the same as a grade last year. So what do you do then?

Time for Tea? Can AI spot a nice cuppa?

Just like everyone else in the UK, we are getting very excited about National Tea Day, which takes place on 21 April. The day is a great opportunity to share tea brewing preferences, and the strength of the perfect 'cuppa' is always hotly debated. So we thought it would be interesting to get the view of AI.

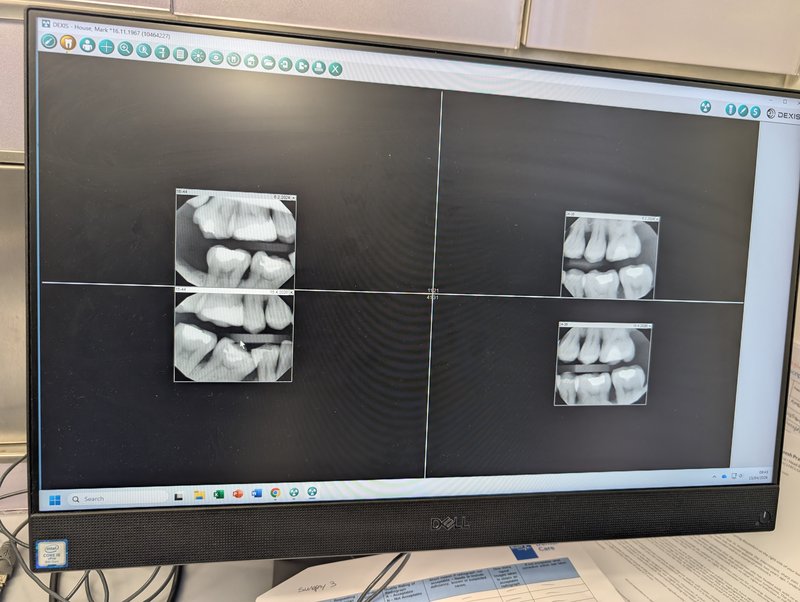

This morning I went for a routine dental check‑up. What struck me was how naturally my dentist fell into a comparative frame. He wasn’t mentally ticking boxes on a rubric. He was looking at two artefacts and asking, “Which represents a healthier, more stable situation?”

A Famous Exam Story With a Hidden Assessment Problem

The story of the Barometer Question is usually told as a joke at the expense of an inflexible examiner. But if you look at it through an assessment lens, it is really a story about design failure, construct clarity, and the importance of a strong holistic statement of quality.